Introduction

Integrating the Oracle Cloud HCM application with various external and internal applications is mission critical for the overall enterprise application landscape. It enables seamless information exchange between systems, which supports accurate transaction processing and reporting for sound business decisions. In this blog we provide a high-level understanding of the integration patterns most frequently used around Oracle Cloud HCM, which can be incorporated into your integration strategy. We list 12 integration patterns (7 Inbound & 5 Outbound) built on Oracle PaaS, with OIC (Oracle Integration Cloud) as the middleware and ATP (Autonomous Transaction Processing) as the integration database. They cover file-based (batch), API (REST), and web service (SOAP) integrations.

The advantage of grouping integrations this way (as repetitive patterns) is that it allows increased reuse, gives consistent and predictable behaviour, eases maintenance, and provides clarity to stakeholders (including designers and developers).

These patterns were also discussed in the Oracle webinar session Oracle Connect and Extend for SaaS: HCM.

The pattern summary is below, and each pattern is described in detail in its own section. The patterns are presented in order of increasing complexity of requirements.

| No | Mode | Type | Pattern Name & Use Case |
|---|---|---|---|
| 1 | Inbound | Batch/File | Cloud Format (HDL) |
| 2 | Inbound | Batch/File | Non-Cloud Format |
| 3 | Inbound | Batch/File | Complex Transformations – Non-Cloud Format (High Volume Element Entry Retro Updates) |
| 4 | Inbound | Batch/File | Parking Lot Pattern |
| 5 | Inbound | API | Real Time (PUT, POST, PATCH, DELETE Operations with Transformations & Validations) |
| 6 | Inbound | API/Webservice | File to REST/SOAP |
| 7 | Inbound | API | REST to File |
| 8 | Outbound | Batch/File | HCM Extract |
| 9 | Outbound | Batch/File | Complex Reconciliation Reports |
| 10 | Outbound | Batch/File | Atom Feed |
| 11 | Outbound | API | Real Time |
| 12 | Outbound | API | Atom Feed – Parking Lot Pattern |

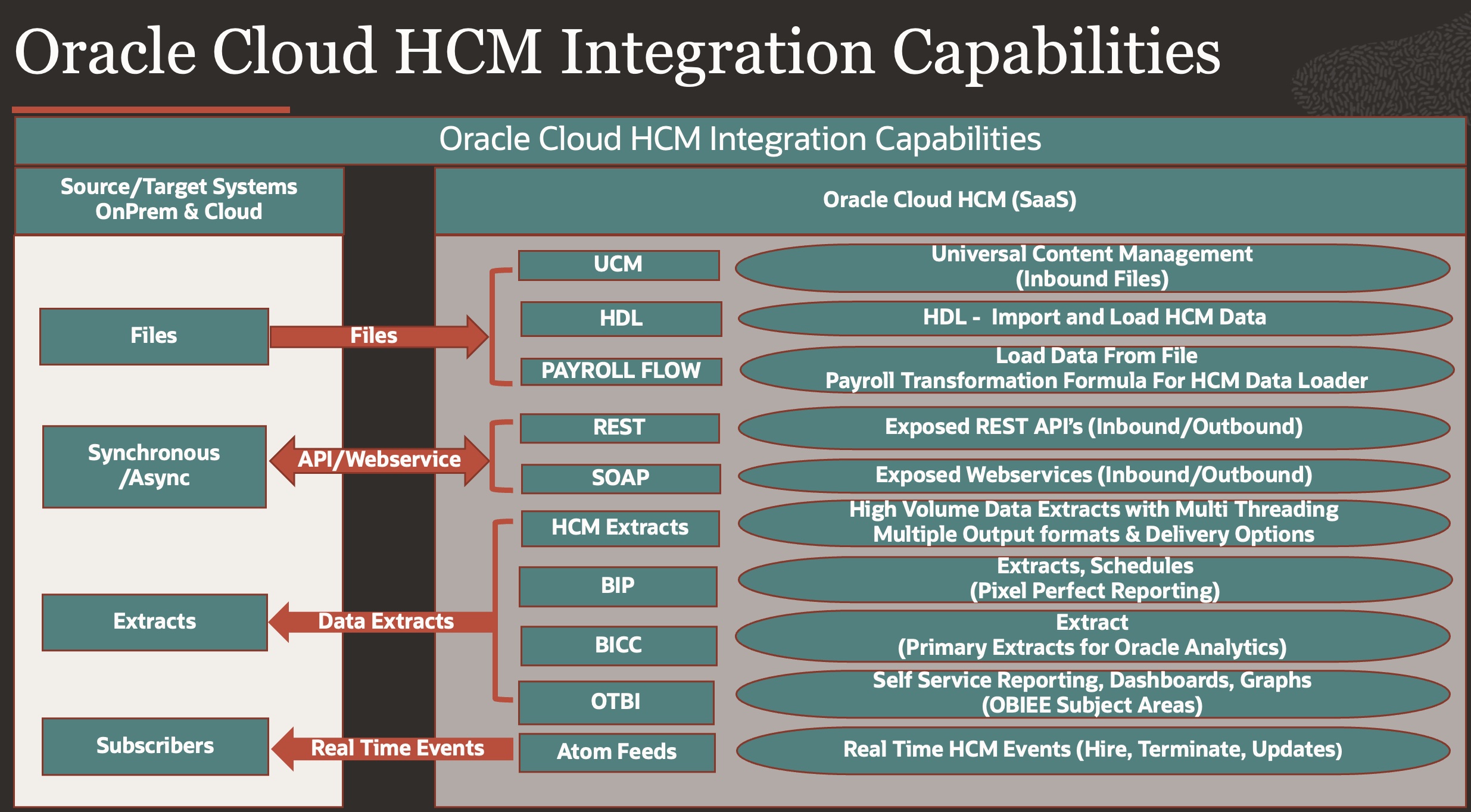

Oracle Cloud HCM Integration Capabilities

Oracle HCM Cloud offers multiple capabilities for batch and real-time integration scenarios. The diagram below depicts these product capabilities:

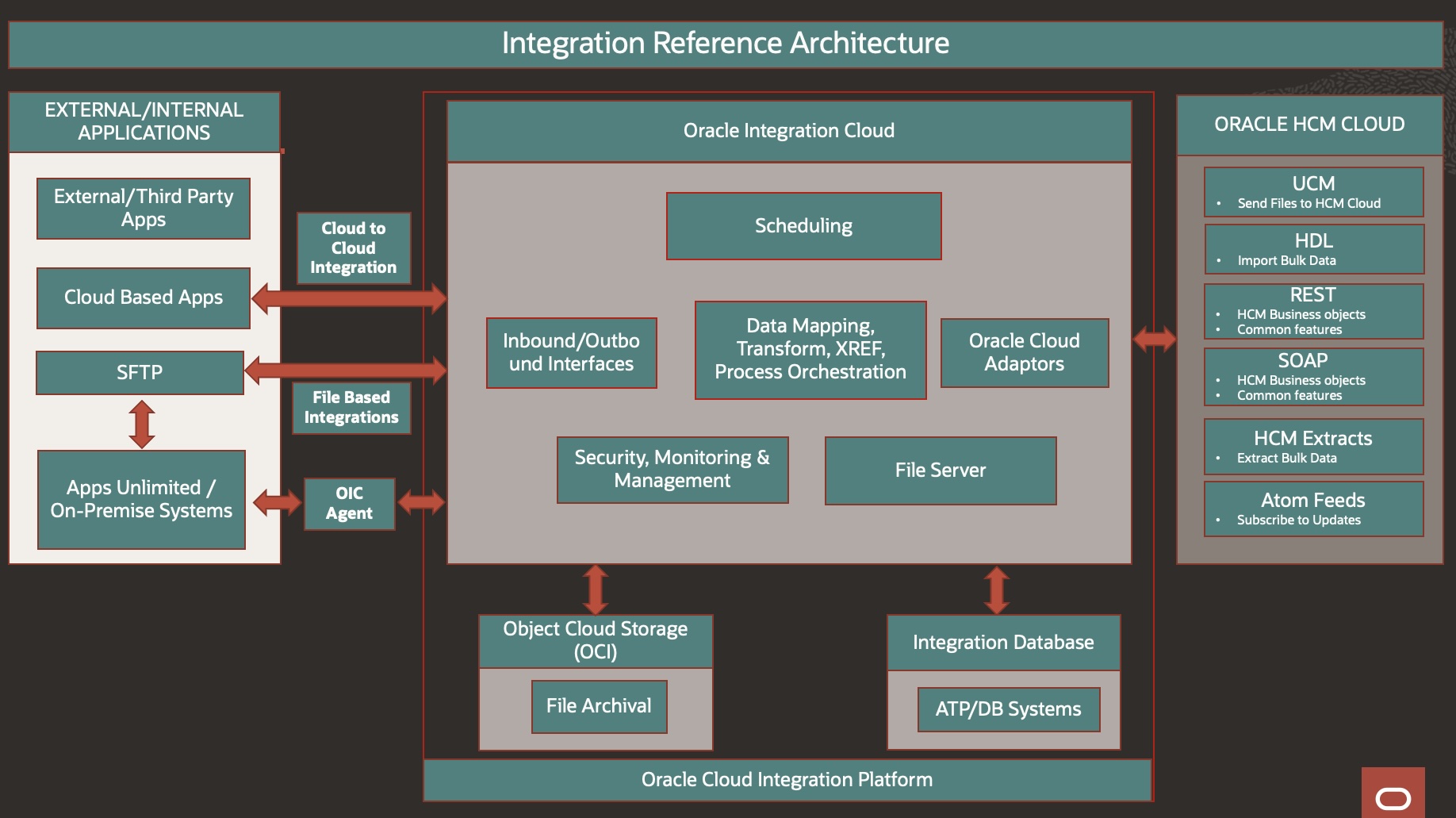

Oracle Integration Cloud

Oracle Integration Cloud provides various adapters to seamlessly integrate Oracle Fusion Cloud HCM applications with external and internal applications.

Integration Patterns

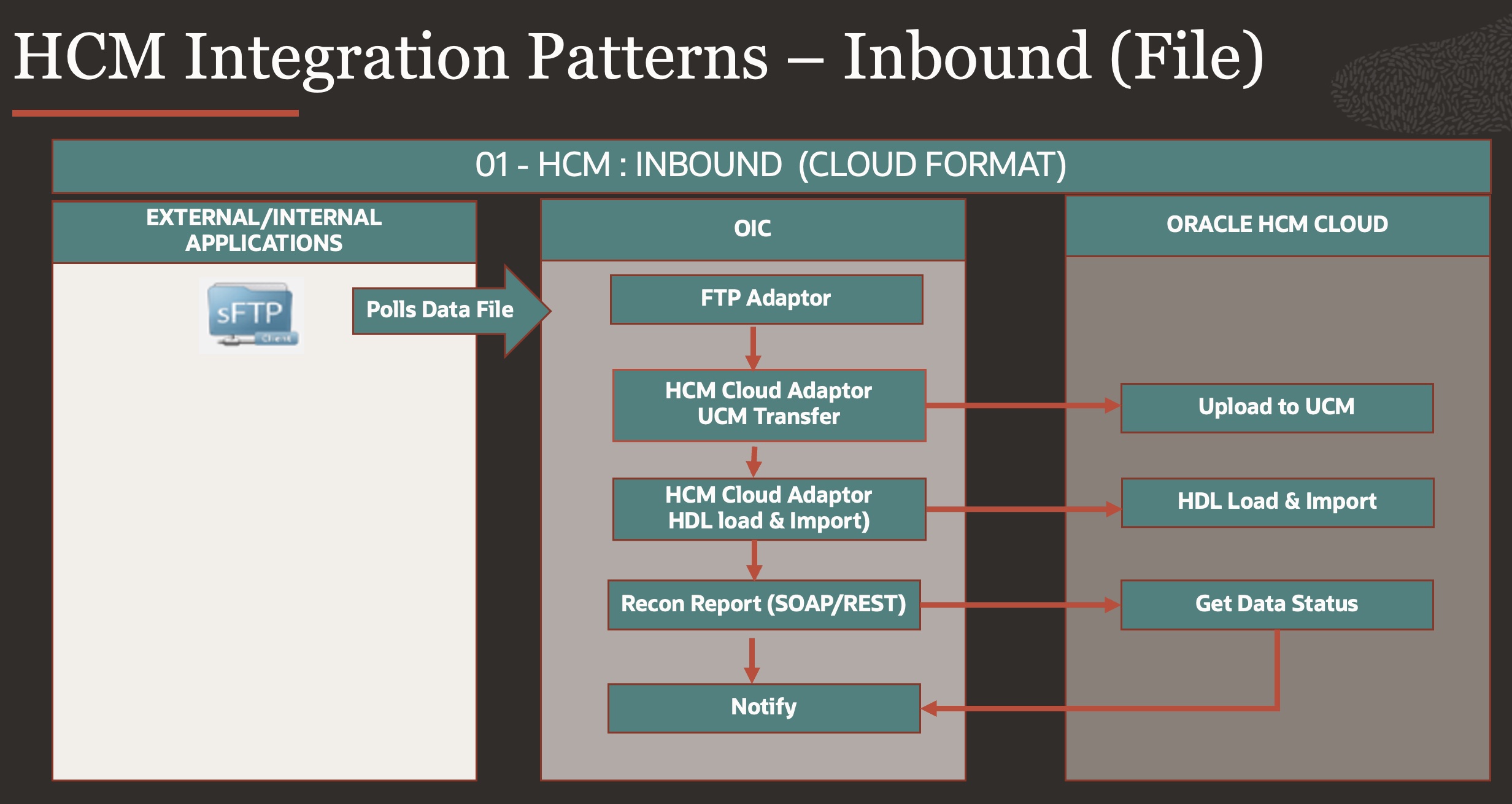

Pattern no 1: Inbound – File Based (Cloud Format – HDL)

This is the simplest inbound pattern: the external or internal application can send files in the Cloud HCM format (HCM Data Loader, HDL). Cloud HCM's HDL process consumes files in the prescribed format and imports the data into the Cloud HCM base tables.

The HDL process can decrypt incoming files using the shared key pair.

At the scheduled time, an OIC process picks up the file from the external FTP server, uploads it to UCM, and kicks off the HCM HDL process to load and import the data.
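The scheduled OIC flow above can be sketched in Python to make the sequence concrete. This is a minimal sketch under stated assumptions, not OIC code: `FileSource` and `HcmGateway` are hypothetical stand-ins for the OIC FTP and HCM adapter actions, and the sketch assumes the source drops plain `.dat` files that still need to be zipped for HDL (if it already sends zipped or encrypted archives, skip `package_for_hdl` and let HDL decrypt them).

```python
import io
import zipfile
from typing import Protocol


class FileSource(Protocol):
    """Hypothetical wrapper around the external FTP server used by OIC."""

    def list_files(self, suffix: str) -> list[str]: ...
    def read(self, name: str) -> bytes: ...


class HcmGateway(Protocol):
    """Hypothetical wrapper around the UCM upload and HDL submission steps."""

    def upload_to_ucm(self, file_name: str, content: bytes) -> str: ...
    def submit_hdl(self, content_id: str) -> str: ...


def package_for_hdl(dat_name: str, dat_bytes: bytes) -> bytes:
    """Wrap one .dat file in a zip archive, the container HDL loads."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(dat_name, dat_bytes)
    return buffer.getvalue()


def run_scheduled_load(source: FileSource, hcm: HcmGateway) -> list[str]:
    """One scheduled run: pick each .dat file, upload it to UCM, start HDL.

    Returns the HDL process IDs so the run can be tracked afterwards.
    """
    process_ids = []
    for name in source.list_files(".dat"):
        zip_name = name.rsplit(".", 1)[0] + ".zip"
        content_id = hcm.upload_to_ucm(zip_name, package_for_hdl(name, source.read(name)))
        process_ids.append(hcm.submit_hdl(content_id))
    return process_ids
```

Returning the HDL process IDs gives each run something concrete to track and report on.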

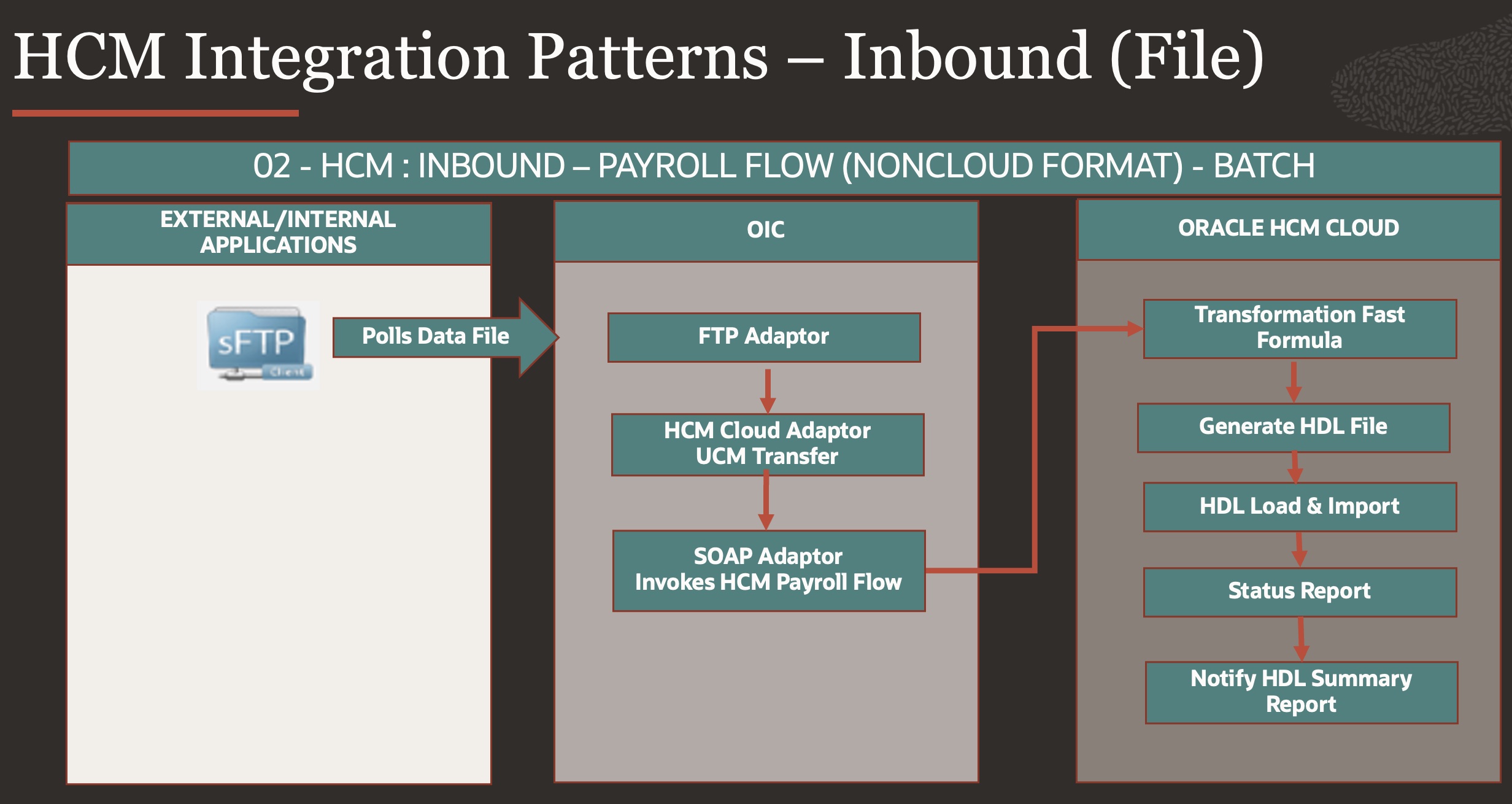

Pattern no 2: Inbound – File Based (Non-Cloud Format)

Now we start increasing the complexity. External or internal applications may be unable to send files in the Cloud (HDL) format for various reasons, such as source keys from Cloud HCM being unavailable to them or a lack of transformation capability. In that case, Cloud HCM can transform non-cloud files into the Cloud HCM (HDL) format using a transformation fast formula embedded in a payroll flow, an out-of-the-box feature of Oracle Cloud HCM. One of the payroll flow tasks then starts the HCM HDL flow to import the data into the HCM base tables, as in Pattern 1.

The payroll flow can decrypt files, so the transformation fast formula (FF) can read encrypted files and perform the necessary transformation; refer to Payroll Process Configuration Parameters.

This approach works well for simple to moderately complex transformations and validations at low or medium record volumes. For high-volume files with many complex transformations or calculations, it may perform poorly or not work at all. We discuss the limitations of the payroll transformation formula, and a possible workaround, in the next pattern.
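Conceptually, the transformation formula turns each source row into an HDL line. The Python sketch below mirrors that mapping outside Fast Formula purely for illustration. The source column names (`assignment`, `element`, `amount`, `eff_date`), the DD-MM-YYYY source date format, and the HDL object and attribute names are all assumptions; a real implementation would be written as the transformation formula itself.

```python
import csv
import io
from datetime import datetime

# Placeholder HDL business object and attributes; confirm the real names in
# the HDL business object reference before using anything like this.
HDL_OBJECT = "ElementEntry"
HDL_ATTRIBUTES = ["AssignmentNumber", "ElementName", "EffectiveStartDate", "ScreenEntryValue"]


def transform_row(row: dict[str, str]) -> str:
    """Map one non-cloud CSV row to one pipe-delimited HDL MERGE line."""
    # Assumed source date format DD-MM-YYYY; HDL's default is YYYY/MM/DD.
    start = datetime.strptime(row["eff_date"], "%d-%m-%Y").strftime("%Y/%m/%d")
    values = [row["assignment"].strip(), row["element"].strip(), start, row["amount"].strip()]
    return "|".join(["MERGE", HDL_OBJECT] + values)


def transform_file(text: str) -> str:
    """Emit the METADATA header once, then one MERGE line per source row."""
    header = "|".join(["METADATA", HDL_OBJECT] + HDL_ATTRIBUTES)
    rows = csv.DictReader(io.StringIO(text))
    return "\n".join([header] + [transform_row(row) for row in rows])
```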

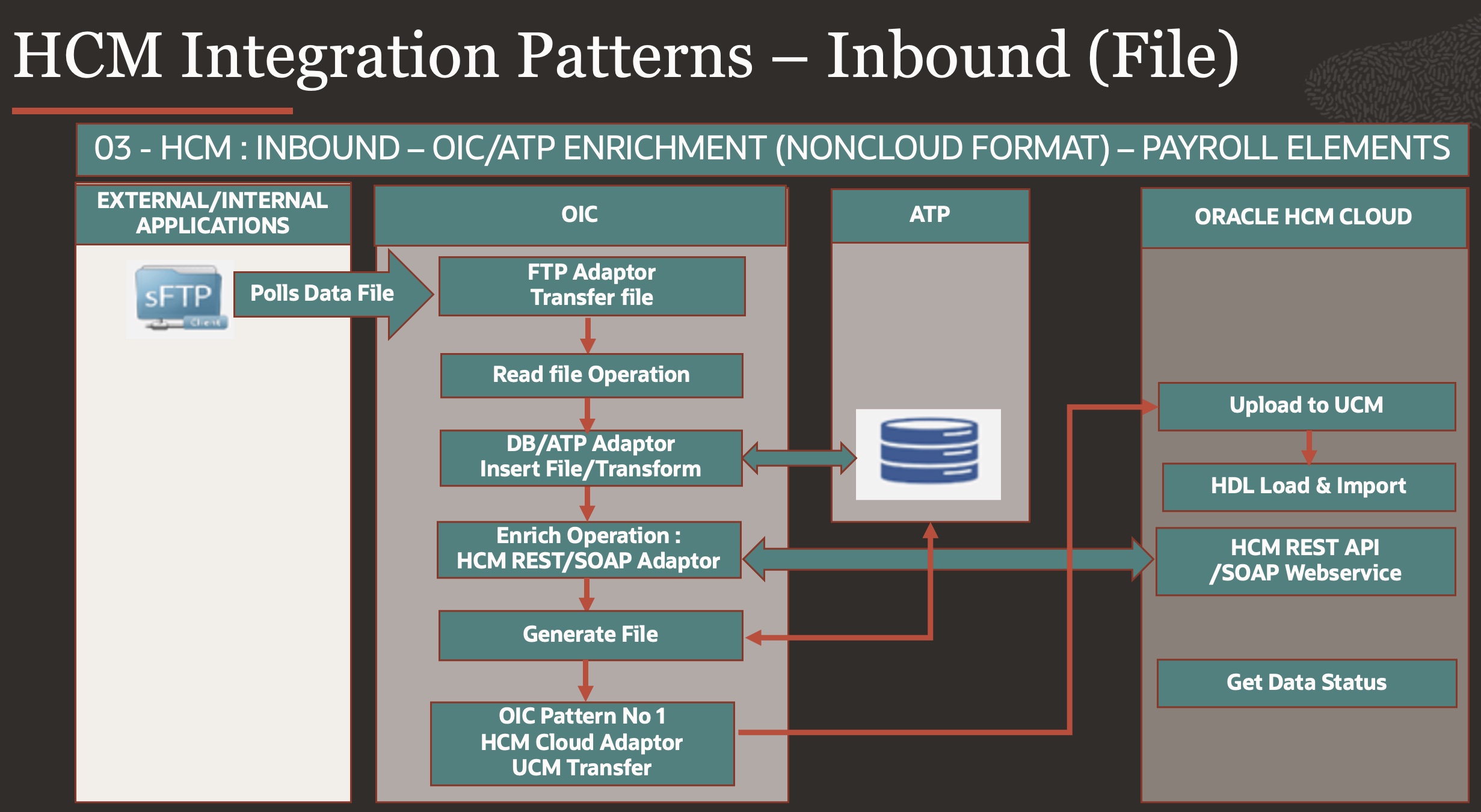

Pattern no 3: Inbound – File Based (Complex Transformations – Non-Cloud Format)

The payroll flow transformation fast formula is an excellent utility, but it has some limitations and can run into performance issues when large data volumes are combined with very complex data transformations. A few reasons:

- Currently, FF supports reading only .txt, .csv, and .dat files.

- FF can read only rows shorter than 2,000 characters; beyond that, it cannot read the row or column data.

- There is a 1,000-character limit per formula expression, so complex logic has to be split into multiple formulas called from a parent FF, which makes the implementation complex and hard to read.

- The GET_VALUE_SET function, when called from a fast formula, returns the “ID Column Name” defined in the value set. Its return value is limited to 100 characters: if the column content is longer than 100 characters, it returns a blank value. Retrieving longer content requires multiple value set calls on the same data set with different string lengths, which results in very poor processing performance.
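Because these limits are mechanical, a cheap pre-flight check can decide whether a file can go through Pattern 2 or should be routed to the database-backed approach described next. The sketch below is hypothetical: the function name and the routing idea are assumptions, and the thresholds are simply the ones listed above, so verify them against current documentation.

```python
from pathlib import PurePath

# Limits taken from the list above; re-check them against current documentation.
SUPPORTED_EXTENSIONS = {".txt", ".csv", ".dat"}
MAX_ROW_LENGTH = 2000  # rows must be shorter than this for FF to read them


def fits_transformation_formula(file_name: str, text: str) -> list[str]:
    """Return the reasons a file cannot go through the payroll transformation FF.

    An empty list means the file looks safe for Pattern 2.
    """
    problems = []
    if PurePath(file_name).suffix.lower() not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported extension: {file_name}")
    for line_no, line in enumerate(text.splitlines(), start=1):
        if len(line) >= MAX_ROW_LENGTH:
            problems.append(f"row {line_no} has {len(line)} characters")
    return problems
```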

To overcome these limitations, we propose adding a persistence layer (a database acting as the integration database) coupled with OIC as the integration layer. This provides scale and the capability for complex, high-volume transformations using Oracle Database processing: complex database routines and packages can perform the data transformation and file generation.

High-volume payroll retropay processing (historical element and input value updates) is a good example of this use case: it involves complex data transformation and enrichment, and payroll retro processing is generally computationally intensive.
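The core idea of this pattern, set-based processing in the integration database instead of row-by-row formula logic, can be sketched with Python's built-in `sqlite3` module standing in for ATP. The staging table, the consolidation rule, and the HDL column order are hypothetical; in ATP the same logic would more likely live in a PL/SQL package called from OIC.

```python
import sqlite3


def build_retro_lines(raw_rows: list[tuple[str, str, str, float]]) -> list[str]:
    """Stage raw rows, then let one set-based SQL statement build the HDL lines."""
    db = sqlite3.connect(":memory:")  # stand-in for the ATP integration schema
    db.execute("CREATE TABLE stg_retro (assignment TEXT, element TEXT, eff_date TEXT, amount REAL)")
    db.executemany("INSERT INTO stg_retro VALUES (?, ?, ?, ?)", raw_rows)
    # Hypothetical rule: consolidate all adjustments for the same assignment,
    # element and effective date into a single entry. SQL handles the whole
    # set at once instead of looping row by row as a fast formula would.
    query = """
        SELECT 'MERGE|ElementEntry|' || assignment || '|' || element || '|'
               || replace(eff_date, '-', '/') || '|' || printf('%.2f', SUM(amount))
        FROM stg_retro
        GROUP BY assignment, element, eff_date
        ORDER BY assignment, element, eff_date
    """
    lines = [row[0] for row in db.execute(query)]
    db.close()
    return lines
```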

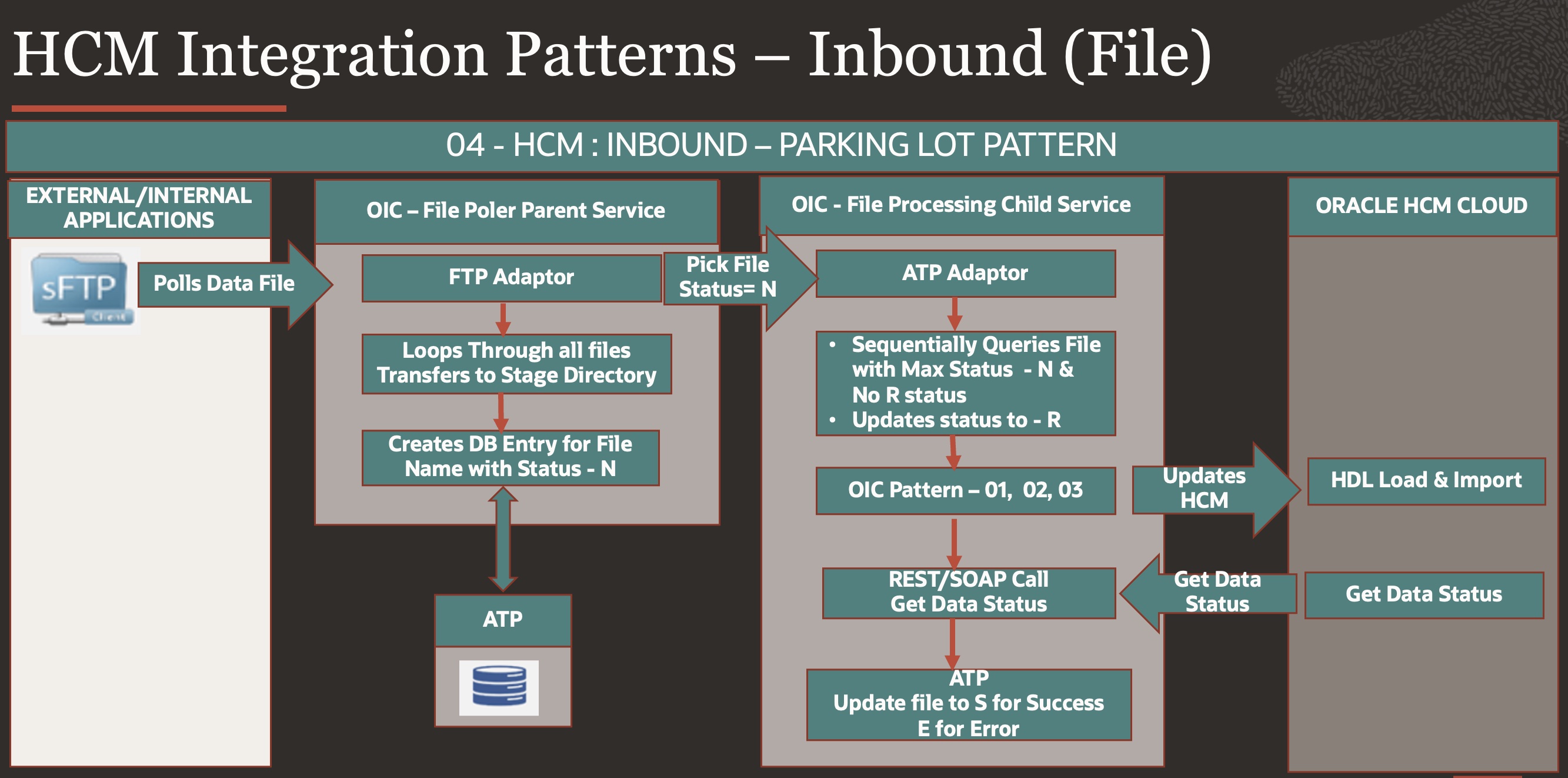

Pattern no 4: Inbound – File Based (Parking Lot Pattern)

Consider a scenario where the source system sends multiple files in a short time, as a burst of data files, while the target system (Cloud HCM) needs to process them sequentially and in a controlled way so that it is not overwhelmed or flooded. Where the producer and consumer have different production and consumption rates, we propose an intermediate storage so that there is control over how files and data are processed safely.

As depicted in the diagram above, there are two OIC processes:

- File Poller Parent Service

  - This service keeps polling the SFTP server for data files.

  - An OIC scheduled process scans the files in the external SFTP directory and uploads them to a stage directory, which can be OIC SFTP or any other internal SFTP server.

  - The OIC process creates an entry in an ATP table with the file name and integration name, and marks the file status as New (N).

- File Processing Child Service

  - This process is scheduled to run at a defined frequency, for example every 10 minutes.

  - It scans the ATP table for new files (status N), but only if no file is in status R (Running); otherwise, it ignores all file records in that run.

  - It picks the max of the file records from the internal or OIC SFTP, so that only one file, the most recent, is selected, and changes its status to R (Running). This ensures that the same file is not picked again in the next run and that files are processed sequentially.

  - Once the file is locked for processing, it can be processed using any of Patterns 1, 2, or 3 discussed above.

  - Once processing is complete, the file is marked S (processed) or E (error), so that the next file can be picked up and processed.
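The control table and the two services can be sketched as follows, again with `sqlite3` standing in for the ATP table; the table and column names are hypothetical. The check for a Running file and the claim of the next New file happen in a single conditional `UPDATE`; in ATP, if scheduled runs can overlap, you would still want explicit row locking (for example `SELECT ... FOR UPDATE`). Note that this sketch picks the oldest New file (arrival order), while the step above describes picking the max (most recent) record, so change `MIN` to `MAX` if that is the intended behaviour.

```python
import sqlite3
from typing import Optional


def create_control_table(db: sqlite3.Connection) -> None:
    # Status codes from the text: N = New, R = Running, S = Success, E = Error.
    db.execute(
        """CREATE TABLE file_control (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               file_name TEXT NOT NULL,
               integration TEXT NOT NULL,
               status TEXT NOT NULL DEFAULT 'N')"""
    )


def register_file(db: sqlite3.Connection, file_name: str, integration: str) -> None:
    """Parent (poller) service: record each staged file as New."""
    db.execute("INSERT INTO file_control (file_name, integration) VALUES (?, ?)", (file_name, integration))


def claim_next_file(db: sqlite3.Connection) -> Optional[tuple[int, str]]:
    """Child service: move one New file to Running, only if nothing is Running."""
    cur = db.execute(
        """UPDATE file_control SET status = 'R'
           WHERE id = (SELECT MIN(id) FROM file_control WHERE status = 'N')
             AND NOT EXISTS (SELECT 1 FROM file_control WHERE status = 'R')"""
    )
    if cur.rowcount == 0:
        return None  # either a file is still Running or nothing is waiting
    return db.execute("SELECT id, file_name FROM file_control WHERE status = 'R'").fetchone()


def finish_file(db: sqlite3.Connection, file_id: int, ok: bool) -> None:
    """Mark the file S or E so the next run can pick up the following file."""
    db.execute("UPDATE file_control SET status = ? WHERE id = ?", ("S" if ok else "E", file_id))
```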

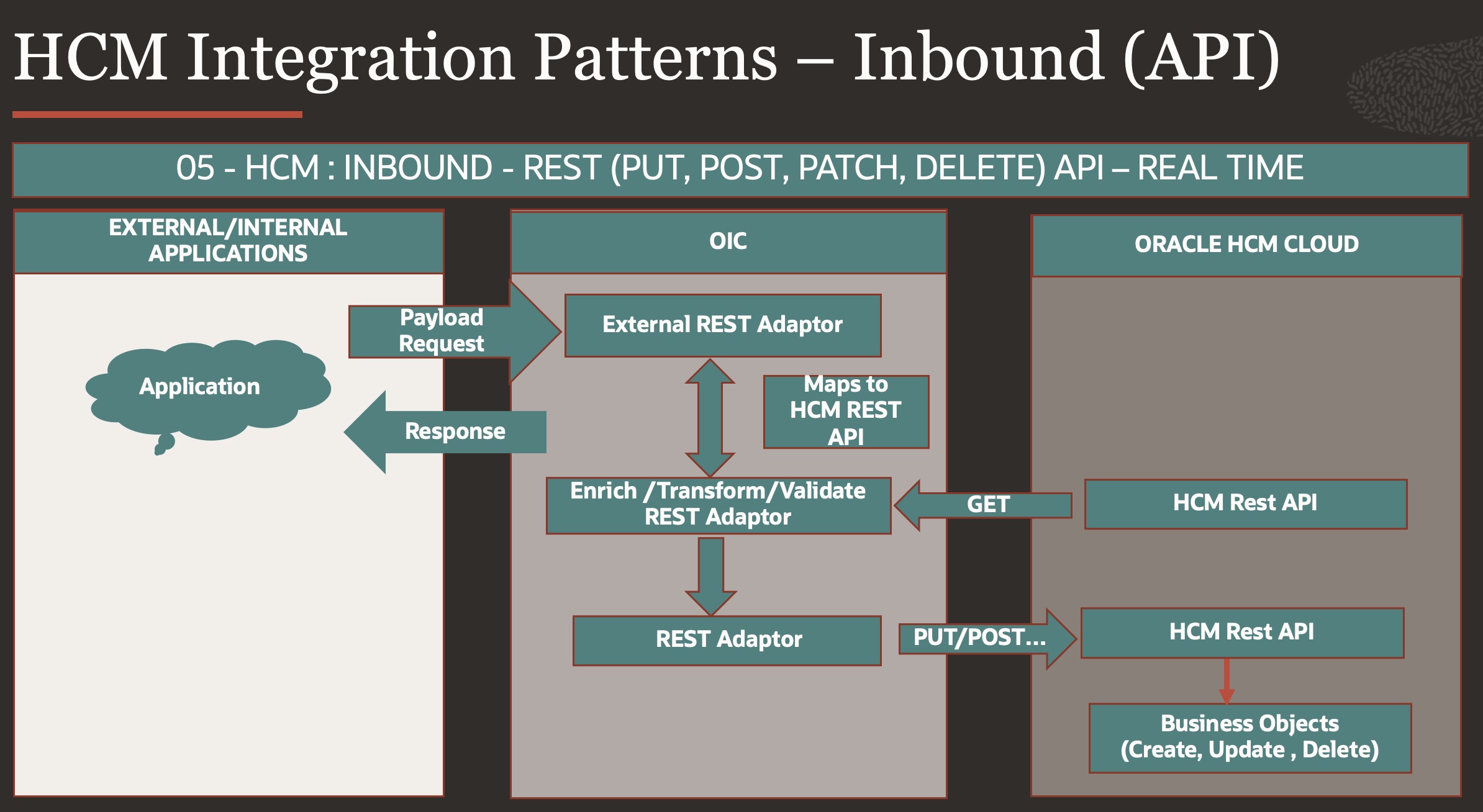

Pattern no 5: Inbound – API (Real Time)

When an inbound integration must update Cloud HCM data in real time, for example OTL time entries (timeEventRequests) for employees' punch-in and punch-out times, Cloud HCM provides APIs to perform such transactions synchronously.

However, using the Cloud HCM APIs directly may not be straightforward for the end customer, because some source keys may be required for the update, or some validations may need to be performed before a transaction is posted to the system. For example:

1. OTL is configured for both Projects and Payroll. In this case, the external system needs to send both the person number and the assignment number, but it may have access only to the employee number and not to the assignment number details. Middleware (OIC) is then needed to find the person's active assignment number and prepare the payload before calling the OTL REST API for punch in and punch out.

2. There may be business validations on custom attributes in Cloud HCM that must pass before a time entry is made, for example a security compliance flag (set by any custom process that makes a person security compliant in the system) or the existence of an external identifier in Core HR. Only once the person meets that criterion are they allowed to make time entries against a project or payroll.

3. There may be mandatory columns configured in OTL time entries, such as project details (PROJECT_ID, TASK_ID, Expenditure). Either the external customer passes this information in the OTL REST API call, or middleware (OIC) is needed to retrieve it, prepare the payload, and pass it to the timeEventRequests REST API for punch in and punch out.

From an observability point of view, the following also needs to be considered:

4. Suppose OTL receives 100 records and 10 of them error out. Tracking those 10 records for error details, preparing an error report, and sending notifications would need middleware (OIC).

5. From a security point of view, the organization may want to track security events such as a terminated employee trying to punch in and being denied because they are no longer an active employee; notifications can be generated for such events.

In such cases, OIC becomes a wrapper service that hides all this complexity from the external system and performs the enrichment and validations against Cloud HCM before making the final update and returning the desired response.
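The enrichment and validation steps (items 1, 2, 4 and 5) can be sketched as below. The lookups are passed in as hypothetical callables standing in for the Cloud HCM REST calls OIC would make, and the outgoing field names are placeholders rather than the documented timeEventRequests schema.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class PunchResult:
    accepted: list[dict] = field(default_factory=list)
    rejected: list[tuple[str, str]] = field(default_factory=list)  # (person number, reason)


def build_punch_payloads(
    punches: list[dict],
    find_active_assignment: Callable[[str], Optional[str]],
    is_compliant: Callable[[str], bool],
) -> PunchResult:
    """Enrich and validate punches before they are sent to the time entry REST API."""
    result = PunchResult()
    for punch in punches:
        person = punch["personNumber"]
        assignment = find_active_assignment(person)
        if assignment is None:
            # e.g. a terminated worker: a candidate for a security notification
            result.rejected.append((person, "no active assignment"))
        elif not is_compliant(person):
            result.rejected.append((person, "custom compliance check failed"))
        else:
            # Placeholder field name, not the documented timeEventRequests schema.
            result.accepted.append({**punch, "assignmentNumber": assignment})
    return result
```

The rejected list doubles as the raw material for the error report and notifications described in items 4 and 5.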

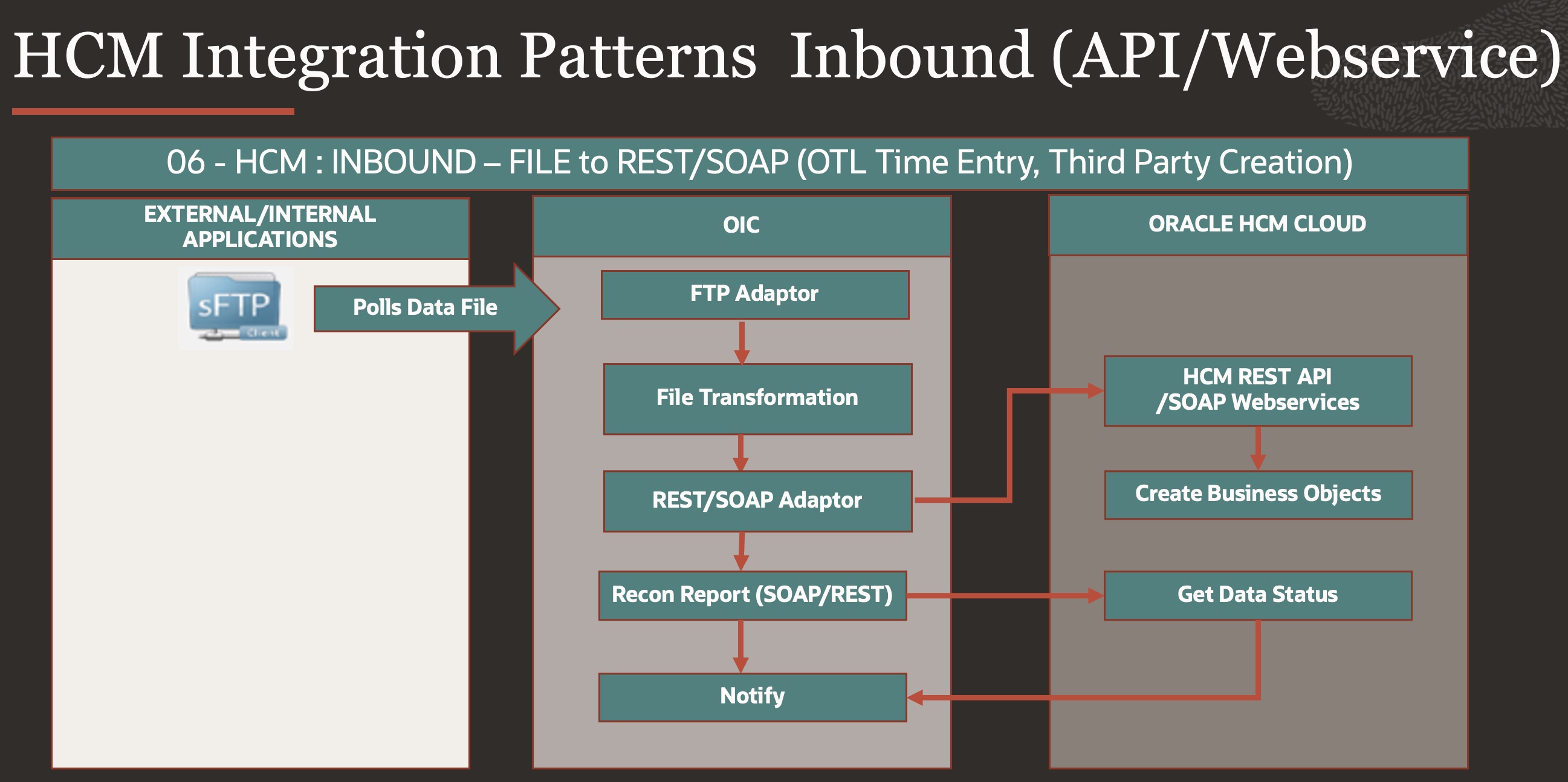

Pattern no 6: Inbound – API (File to REST/SOAP)

This pattern is very useful when the external system cannot invoke Cloud HCM APIs or web services and would rather continue to send files for consumption.

One example is OTL time entries (timeEventRequests). Cloud HCM provides a REST API to capture punch-in and punch-out times as real-time entries, but the external system sends weekly or daily files containing the time entries captured in its own time entry application. This pattern is well suited to such integrations.
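A minimal sketch of the file-to-API replay, assuming a CSV file with a header row and a hypothetical `post_entry` callable that stands in for the OIC invoke of the time entry REST API and returns the HTTP status code. Failures are collected with their line numbers so they can feed an error report.

```python
import csv
import io
from typing import Callable


def replay_file_as_api_calls(
    text: str,
    post_entry: Callable[[dict], int],
) -> tuple[int, list[tuple[int, int]]]:
    """Send each row of a daily or weekly time file as one REST call.

    Returns the success count and a list of (line number, HTTP status) failures.
    """
    ok, failures = 0, []
    # start=2 because line 1 of the file is the header row
    for line_no, row in enumerate(csv.DictReader(io.StringIO(text)), start=2):
        status = post_entry(row)
        if 200 <= status < 300:
            ok += 1
        else:
            failures.append((line_no, status))
    return ok, failures
```

The line numbering assumes no quoted field spans multiple lines.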

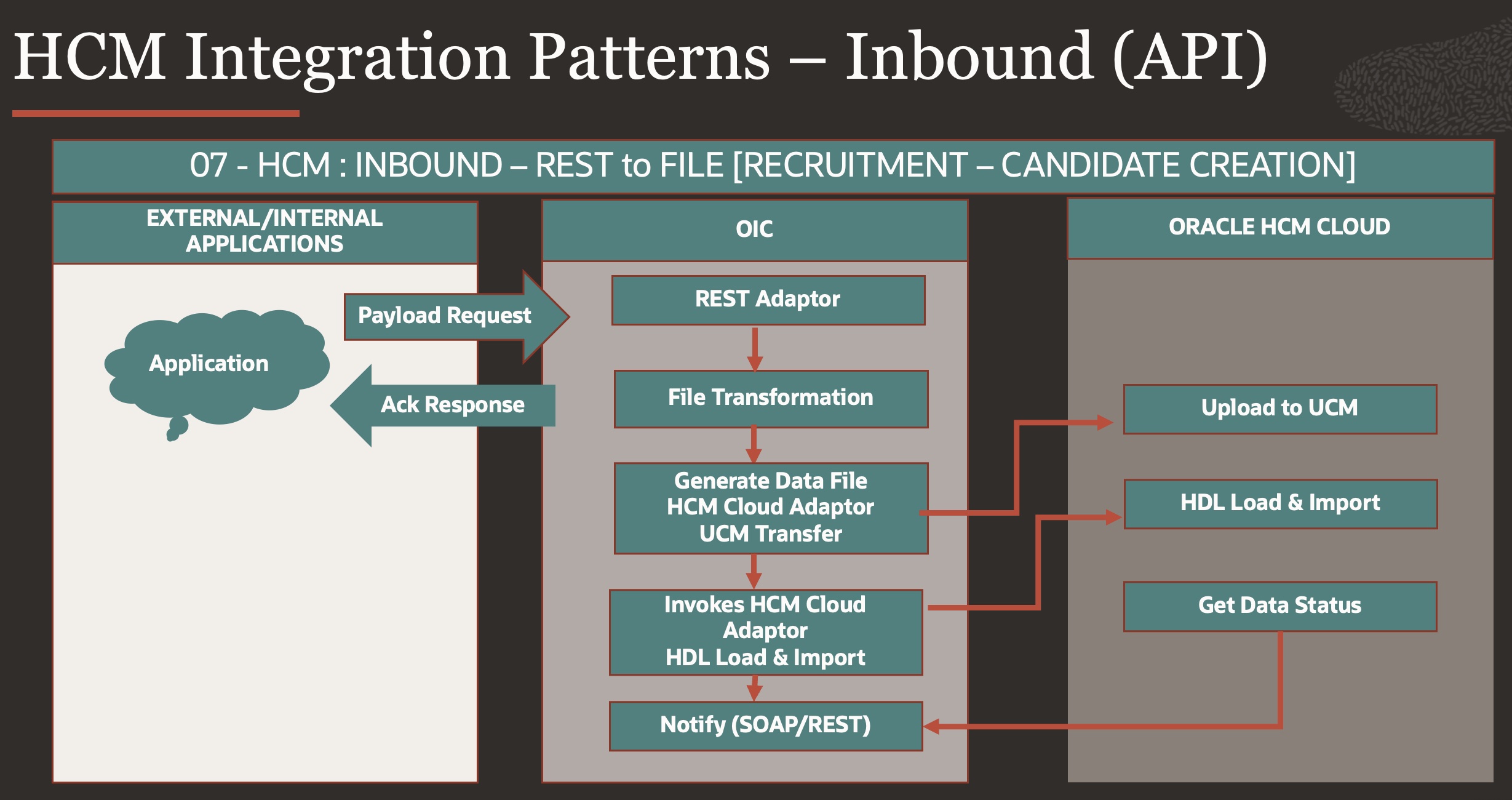

Pattern no 7: Inbound – API (REST to FILE)

In a few cases, Cloud HCM does not support a business object through a public REST API, for example candidate job applications (recruitingCEJobApplications), and the HDL file method is the only integration option. However, the external system wants REST methods to process candidate creation along with candidate job applications.

Here, OIC exposes a REST method to the external application, captures the request payload, converts it to an HDL file, and submits the HDL process.

Since HDL takes some time to process, the OIC process can return a correlation ID (the HDL process ID) as an acknowledgement to the calling external service, and the external system can check the HDL status using the getDataSetStatus HDL SOAP operation.

For high data volumes, or to gain throttling control, ATP can be used to capture the external service payloads and process them as sequential HDL loads, implementing the parking lot pattern described in Pattern 4.
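The REST-to-HDL conversion can be sketched as below. HDL files are pipe-delimited, with a `METADATA` line naming the attributes followed by one `MERGE` line per record. The business object and attribute names here are placeholders, and `submit_hdl` is a hypothetical callable standing in for the UCM upload plus HDL submission.

```python
from typing import Callable, Optional

# Hypothetical mapping owned by the OIC integration; the business object and
# attribute names are placeholders, not the documented HDL objects.
HDL_OBJECT = "Candidate"
HDL_ATTRIBUTES = ["CandidateNumber", "FirstName", "LastName"]


def rows_to_hdl(business_object: str, attributes: list[str], rows: list[dict]) -> str:
    """Render REST payload rows as pipe-delimited HDL text (METADATA + MERGE lines)."""

    def cell(value: Optional[object]) -> str:
        text = "" if value is None else str(value)
        # A pipe or line break would corrupt the HDL line; this sketch rejects
        # such values rather than implementing HDL escaping.
        if "|" in text or "\n" in text:
            raise ValueError(f"unsupported character in value: {text!r}")
        return text

    lines = ["|".join(["METADATA", business_object] + attributes)]
    for row in rows:
        lines.append("|".join(["MERGE", business_object] + [cell(row.get(a)) for a in attributes]))
    return "\n".join(lines) + "\n"


def accept_request(rows: list[dict], submit_hdl: Callable[[str], str]) -> dict:
    """Synchronous part of the REST facade: build the file, start HDL, acknowledge."""
    process_id = submit_hdl(rows_to_hdl(HDL_OBJECT, HDL_ATTRIBUTES, rows))
    # The caller later polls the HDL status with this ID (e.g. getDataSetStatus).
    return {"status": "ACCEPTED", "correlationId": process_id}
```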

Pattern no 8: Outbound – File (HCM Extract)

This is the simplest outbound integration pattern. Cloud HCM provides HCM Extract as the recommended utility for all file-based extracts, with multiple capabilities from a performance and delivery standpoint.

In one of the most common use cases, Oracle Cloud HCM needs to send a payroll file (third-party NACHA/direct deposit, cheque/positive pay) to a bank that requires a specific file naming convention and a file that is signed and then encrypted using the keys shared by the bank. This pattern can generate such file extracts and transmit them safely to the bank's SFTP server.
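Two details of the bank delivery are easy to get wrong: the file naming convention and the order of operations (sign first, then encrypt). The sketch below shows both with a hypothetical `PREFIX_YYYYMMDD_NNN.txt` naming rule and placeholder `sign` and `encrypt` callables; real PGP signing and encryption would be performed by the delivery or middleware tooling with the keys shared by the bank.

```python
from datetime import date
from typing import Callable


def bank_file_name(prefix: str, run_date: date, sequence: int) -> str:
    """Hypothetical bank convention: PREFIX_YYYYMMDD_NNN.txt"""
    return f"{prefix}_{run_date:%Y%m%d}_{sequence:03d}.txt"


def prepare_for_bank(
    content: bytes,
    sign: Callable[[bytes], bytes],
    encrypt: Callable[[bytes], bytes],
) -> bytes:
    """Sign with our private key first, then encrypt with the bank's public key."""
    return encrypt(sign(content))
```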

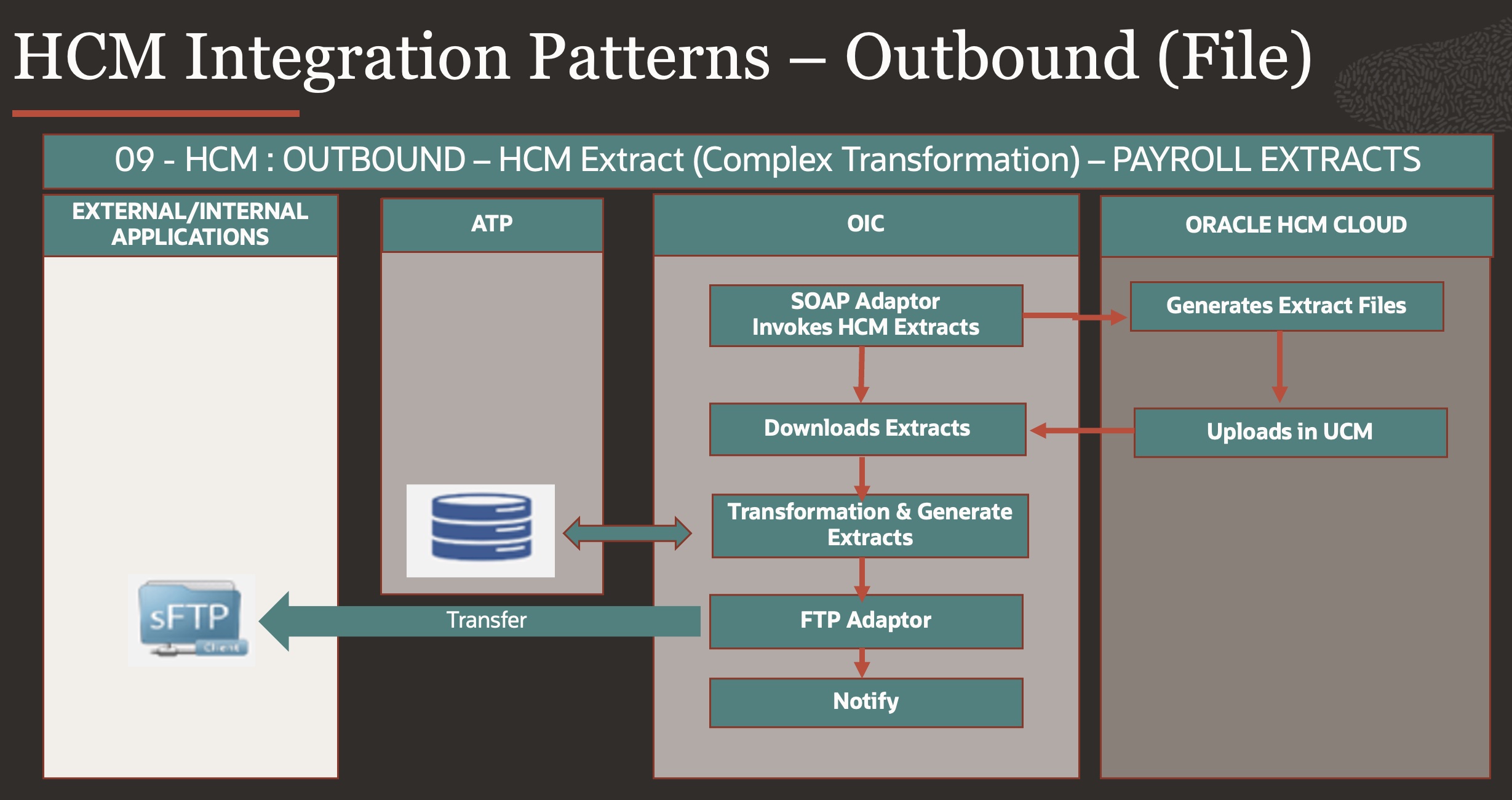

Pattern no 9: Outbound – File (Complex Reconciliation Reports)

There are a few use cases where HCM Extract alone cannot generate the required extracts from Oracle Cloud HCM:

- Complex calculations or complex file transformations.

- A-B or A+B operations over multiple files to generate reconciliation reports: HCM Extract generates multiple files, and the end process needs data from both files after applying union or minus operations to them.

- HCM extracts are driven from a root user entity (UE). If data must come from multiple root UEs, for example one set of payroll data from a payroll-related UE and another set from absence or benefits, which use other UEs, joining both data sets in a single extract file becomes complex and non-performant. In such cases, two extracts can be written, the data uploaded to PaaS DB tables, and the final extract files produced from the database using routines.

- There may be very complex business translation logic that lives outside Cloud HCM. In such cases, data has to be extracted from Cloud HCM into DB tables and then massaged with the external business logic, which is also stored in DB tables or written as DB routines. This way, data from multiple sources can be processed in one place and a single extract file generated.

- Sometimes HCM Extract cannot produce the required layout because of the shape of the underlying extracted data (for example, incorporating pivot-style logic in the extract or template could cause severe performance degradation).

In such cases, this pattern adds data persistence using a database: HCM extracts generate files and upload them to UCM, an OIC process downloads the files and loads them into ATP, the transformations, calculations, and A-B or A+B operations are performed inside ATP, and the final file is generated and transmitted to the external SFTP server.

Payroll data extracts are one such use case: HCM extracts generate multiple files, and the desired extract file combines data from all of them, for example transaction summary and details in one extract.
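The A-B and A+B operations map directly onto SQL set operators. In this sketch `sqlite3` stands in for ATP and spells A-B as `EXCEPT`, where Oracle SQL traditionally uses `MINUS`; the two-table layout and column names are hypothetical.

```python
import sqlite3


def reconcile(file_a: list[tuple], file_b: list[tuple]) -> dict[str, list[tuple]]:
    """Load two extract files into tables and compute A-B and A+B."""
    db = sqlite3.connect(":memory:")  # stand-in for ATP
    for table, rows in (("extract_a", file_a), ("extract_b", file_b)):
        db.execute(f"CREATE TABLE {table} (person_number TEXT, element TEXT, amount REAL)")
        db.executemany(f"INSERT INTO {table} VALUES (?, ?, ?)", rows)
    result = {
        # A-B: rows present in extract A but missing from extract B
        "a_minus_b": db.execute(
            "SELECT * FROM extract_a EXCEPT SELECT * FROM extract_b ORDER BY 1, 2"
        ).fetchall(),
        # A+B: union of both extracts with duplicate rows removed
        "a_plus_b": db.execute(
            "SELECT * FROM extract_a UNION SELECT * FROM extract_b ORDER BY 1, 2"
        ).fetchall(),
    }
    db.close()
    return result
```

`UNION` removes duplicates; use `UNION ALL` if duplicate rows must be kept.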

Pattern no 10: Outbound – File (Atom feed)

Oracle Cloud HCM allows external systems to subscribe to real-time events, such as Hire Employee and Terminate Employee, through Atom feeds.

Not all business objects are supported by Atom feeds (support is mostly around Core HR), but they are extremely beneficial for external systems that need to subscribe and listen to events in Oracle Cloud HCM in order to initiate real-time changes in downstream applications, for example new hire synchronization or alarms to emergency systems.

Since Atom feeds are designed around business objects, external systems sometimes want a collated data feed, delivered as one extract file built from multiple Atom feeds; for a new employee hire, for example: person number, job name, department name, payroll name, and so on. In such cases, the OIC process becomes the Atom feed subscriber and performs all the data transformation and data union to provide one near-real-time extract file feed to external systems at short intervals.
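Collating several feeds into one extract row per person can be sketched as below. It assumes the entries have already been parsed out of the Atom XML into dictionaries keyed by field name; the feed names and field names are hypothetical, and when two feeds carry the same field, the later feed's value wins.

```python
import csv
import io


def collate_feeds(feeds: dict[str, list[dict]], columns: list[str]) -> str:
    """Merge entries from several Atom feeds into one CSV row per person."""
    merged: dict[str, dict] = {}
    for entries in feeds.values():
        for entry in entries:
            merged.setdefault(entry["PersonNumber"], {}).update(entry)
    out = io.StringIO()
    # Missing fields become empty cells; fields not in `columns` are dropped.
    writer = csv.DictWriter(out, fieldnames=columns, extrasaction="ignore", lineterminator="\n")
    writer.writeheader()
    for person in sorted(merged):
        writer.writerow(merged[person])
    return out.getvalue()
```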

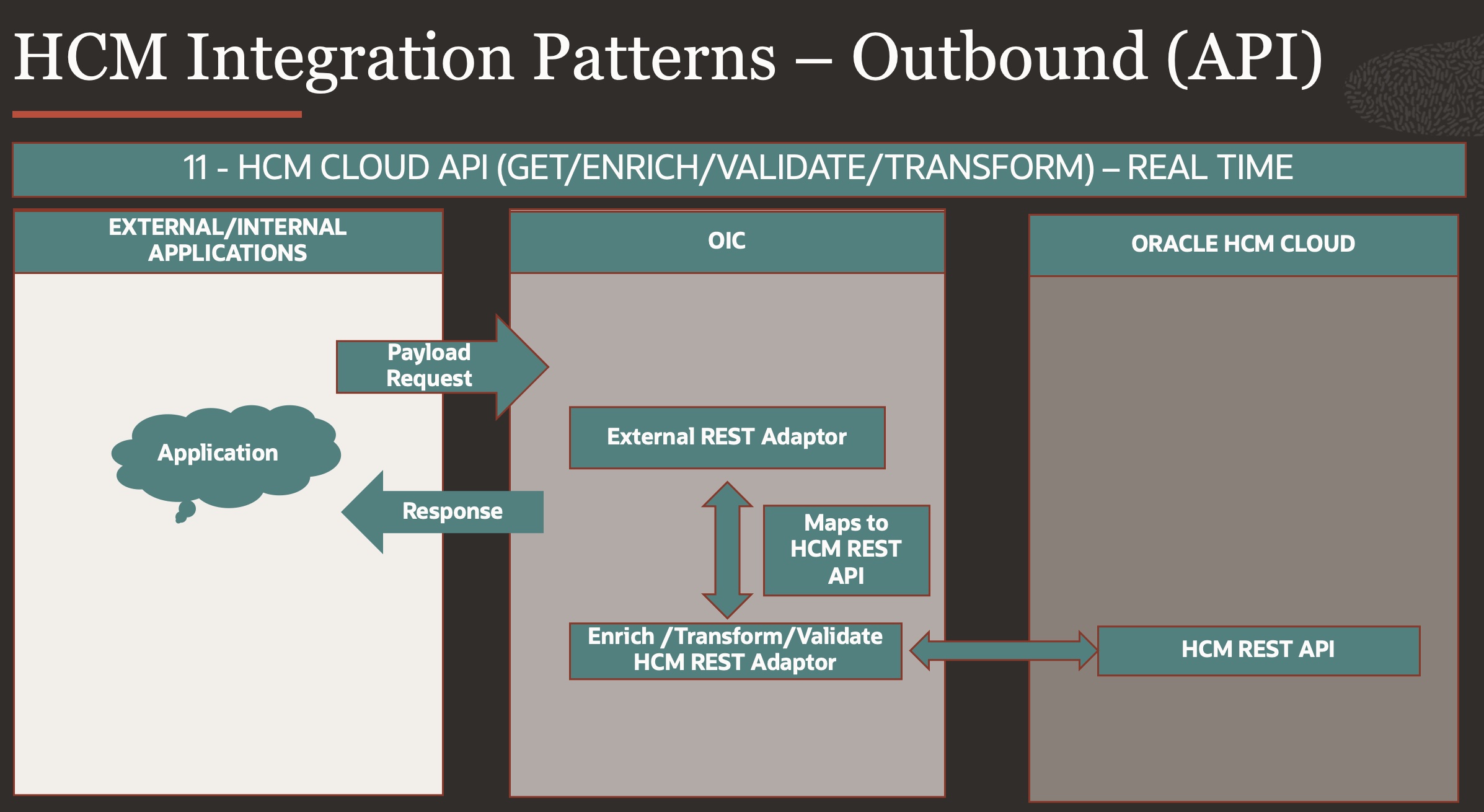

Pattern no 11: Outbound – API (Real Time)

This is a real-time outbound pattern based on Cloud HCM APIs. External systems can call Cloud HCM APIs directly, but doing so can be a little tricky: each API returns many columns around one business object (BO), whereas external or internal systems often want a view across multiple BOs consolidated into one response.

For example, an external application may want person details along with absence (leave) or benefits data (benefit plans enrolled for the year) for one person number; this data is not available from a single REST endpoint.

In such cases, OIC becomes a wrapper service for the external system: it performs the merging, transformation, and validation across multiple Cloud HCM APIs and produces one response for the query parameters in the input payload.
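The wrapper's merge step can be sketched in Python: it calls several sources in parallel and combines the results into one response, reporting a failed source instead of failing the whole call. The fetchers are hypothetical callables standing in for the individual Cloud HCM REST calls, and the 30-second timeout is an arbitrary default.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable

Fetcher = Callable[[str], Any]


def person_360(person_number: str, fetchers: dict[str, Fetcher], timeout: float = 30.0) -> dict:
    """Call several HCM resources in parallel and merge them into one response."""
    response: dict[str, Any] = {"personNumber": person_number}
    with ThreadPoolExecutor(max_workers=max(1, len(fetchers))) as pool:
        futures = {name: pool.submit(fetch, person_number) for name, fetch in fetchers.items()}
        for name, future in futures.items():
            try:
                response[name] = future.result(timeout=timeout)
            except Exception as exc:  # one failing source should not hide the others
                response.setdefault("errors", {})[name] = str(exc)
    return response
```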

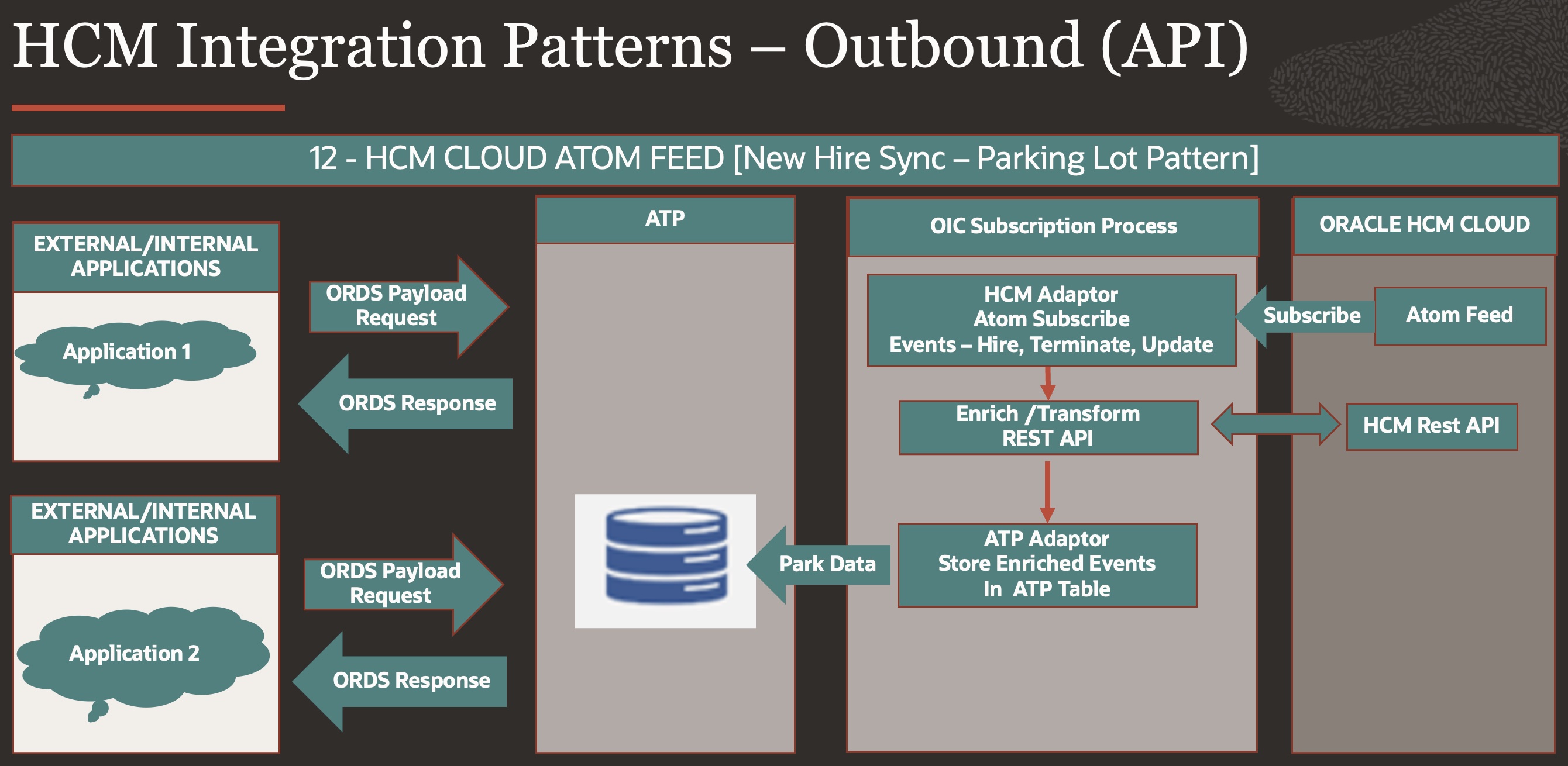

Pattern no 12: Outbound – API (Atom Feed – Parking lot Pattern)

This use case depicts Atom feed subscription by multiple external systems to the same data set view. It uses a database (ATP) as the persistence layer to provide one external view and to control throttling using the parking lot pattern.

This pattern has several advantages over conventional, direct Atom feed subscription by external systems:

- Multiple external systems can subscribe to one data view containing transformed, ready-to-consume data, exposed from ATP as an ORDS REST service.

- Each external system has more control over its own throttling, without choking Cloud HCM with multiple repeated queries.

- The parking lot tables in ATP can be queried through ORDS REST queries, and a message publish status column can be updated for end-to-end integration tracking, which gives full control over integration error handling and debugging.
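The per-subscriber publish tracking can be sketched with `sqlite3` standing in for ATP and a direct function call standing in for the ORDS REST endpoint; the table and column names are hypothetical. Each subscriber pulls at its own pace (`limit` is its throttle), and its delivery rows record what it has already received.

```python
import sqlite3

SCHEMA = """
CREATE TABLE event_outbox (id INTEGER PRIMARY KEY, person_number TEXT, payload TEXT);
CREATE TABLE delivery (subscriber TEXT, event_id INTEGER, status TEXT,
                       PRIMARY KEY (subscriber, event_id));
"""


def pull_batch(db: sqlite3.Connection, subscriber: str, limit: int) -> list[tuple]:
    """Return the next events this subscriber has not received and mark them published."""
    rows = db.execute(
        """SELECT e.id, e.payload FROM event_outbox e
           WHERE NOT EXISTS (SELECT 1 FROM delivery d
                             WHERE d.subscriber = ? AND d.event_id = e.id)
           ORDER BY e.id LIMIT ?""",
        (subscriber, limit),
    ).fetchall()
    db.executemany(
        "INSERT INTO delivery VALUES (?, ?, 'PUBLISHED')",
        [(subscriber, event_id) for event_id, _ in rows],
    )
    return rows
```

Marking rows as published at pull time gives at-most-once delivery; marking them only after the subscriber acknowledges would trade that for at-least-once delivery.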

Conclusion

This blog showed integration patterns commonly used across Oracle Cloud HCM integration projects, with a use case for each. Using these base patterns, common services can be built with configuration parameters or metadata (OIC lookups, ATP tables, VBCS business objects) to make them configurable and repeatable. Once a common service is established with configuration parameters and metadata, multiple integrations can be rolled out easily: an "Integrations as Configurations" approach.

For example, inbound Patterns 1 and 2 can easily be configured with metadata such as file name, SFTP directory, SFTP connection details, payroll flow name, and incoming parameters as a string. Similarly, for outbound Pattern 8, metadata such as the HCM extract name, output file name, encryption and signing details, and target SFTP details can be used to build multiple integrations.
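The "Integrations as Configurations" idea for Patterns 1 and 2 can be sketched as a metadata record plus one common service that reads it. The field names, registry entries, and callables are hypothetical; in practice the metadata would live in an OIC lookup or an ATP table.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class InboundFileConfig:
    """Metadata for Patterns 1 and 2 (could live in an OIC lookup or ATP table)."""

    integration: str
    file_pattern: str
    sftp_directory: str
    sftp_connection: str
    payroll_flow: Optional[str] = None  # set only when a Pattern 2 transformation is needed
    parameters: str = ""


# Hypothetical registry entries; each row is effectively one "integration".
REGISTRY = {
    "WORKER_LOAD": InboundFileConfig("WORKER_LOAD", "Worker_*.zip", "/inbound/hr", "HR_SFTP"),
    "BONUS_LOAD": InboundFileConfig(
        "BONUS_LOAD", "bonus_*.csv", "/inbound/pay", "PAY_SFTP", payroll_flow="Bonus Transformation Flow"
    ),
}


def run_inbound(
    config: InboundFileConfig,
    load_hdl: Callable[[InboundFileConfig], str],
    run_payroll_flow: Callable[[InboundFileConfig], str],
) -> str:
    """One common service; the metadata decides whether Pattern 1 or 2 runs."""
    if config.payroll_flow is None:
        return load_hdl(config)  # Pattern 1: file already in HDL format
    return run_payroll_flow(config)  # Pattern 2: payroll flow transforms, then loads
```

Adding a new inbound file integration then means adding a registry row rather than building a new flow.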

For the rest of the patterns, more elaborate metadata has to be planned to capture further details, such as DB routine names, REST endpoints, and unique transformation integration services, which are needed to build common services around them. The design philosophy is:

“Whatever is repeatable in nature must not be recreated every time but reused with configurations, and whatever is unique should be pushed to a Unique Transformation Integration Service for that integration; that unique service in turn becomes one of the configurations of the common service.”

You can also use these base patterns like Lego building blocks to create more complex patterns for more complex business requirements (for example, bidirectional integrations).

For example, suppose that when an employee is hired in Cloud HCM, the data should be sent to an external system that generates an organisation-wide ID used across all enterprise applications in that organisation, and this ID should then be written back to Cloud HCM as the employee's external identifier. Here we would design the outbound integration using Pattern 10, or Pattern 12 in the case of multiple subscribers (multiple external identifiers), and the inbound integration using Pattern 1 or 2, depending on whether the external system can send an HDL file. The external system can also use Pattern 5 for real-time updates.

I hope this blog has given you a clearer understanding of HCM integration use cases and integration methodology.

References:

Below are some references that are handy when implementing Oracle Cloud HCM integration services using Oracle PaaS (OIC/ATP).

Note: Always refer to the latest documentation.

- Set up Encryption for File Transfer

- OIC HCM Adapter

- OIC Service Limits

- OIC Integration Styles

- HCM HDL Loader

- Payroll Transformation Formula For HCM Data Loader

- Payroll Process Configuration Parameters

- HCM REST APIs: https://docs.oracle.com/en/cloud/saas/human-resources/23b/farws/index.html

- HCM SOAP Web Services: https://docs.oracle.com/en/cloud/saas/human-resources/23b/oeswh/index.html#COPYRIGHT_0000

- Reporting and Data Extracts: https://blogs.oracle.com/fusionhcmcoe/post/reporting-and-data-extracts

- Oracle HCM Integration Patterns and Best Practices