One of the more interesting follow-up questions after the Oracle Database at AWS networking update is not whether direct multi-VPC ODB peering is supported. It is what to do if you already built around the earlier, more constrained model.

A very common starting point looks like this: one ODB network is peered to one transit VPC, and that VPC is attached to AWS Transit Gateway. The transit gateway then extends connectivity to other VPCs. That pattern is still valid, and AWS documentation continues to support it. But now that Oracle Database at AWS allows one ODB network to peer directly with multiple VPCs, some customers will want to simplify part of that design without tearing everything down.

For traffic from the ODB network to reach the TGW through the Transit VPC, there is currently a constraint: the TGW attachment can use only one subnet, and that subnet must be in the same Availability Zone (AZ) as the ODB network. A common landing zone, however, builds redundancy by spreading applications across multiple AZs, and the TGW attachments follow the same multi-AZ layout.

Because an existing application VPC whose workloads and TGW attachment span multiple AZs can’t be used as the transit path for the ODB network, the reference architecture adds a dedicated Transit VPC with a single TGW attachment. In practical terms, the migration target is straightforward: keep the Transit VPC attached to the transit gateway if it still serves a useful purpose, but move selected application VPCs from indirect database access through TGW to their own direct ODB peering connections.
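To make the constraint concrete, here is a minimal Python sketch that encodes our reading of it: a VPC qualifies as the ODB transit VPC only if its TGW attachment uses exactly one subnet, in the ODB network's AZ. The record layout, AZ IDs, and function name are hypothetical; this is a planning aid, not an AWS API call.

```python
# Hypothetical inventory records; field names are illustrative, not an AWS API shape.
TRANSIT_CANDIDATES = [
    {"vpc_id": "vpc-app", "attachment_subnet_azs": ["use1-az1", "use1-az2", "use1-az4"]},
    {"vpc_id": "vpc-transit", "attachment_subnet_azs": ["use1-az1"]},
]


def can_serve_as_odb_transit(attachment_subnet_azs, odb_az):
    """Our reading of the constraint: the TGW attachment must use exactly
    one subnet, and that subnet must be in the ODB network's AZ."""
    return len(attachment_subnet_azs) == 1 and attachment_subnet_azs[0] == odb_az


for vpc in TRANSIT_CANDIDATES:
    ok = can_serve_as_odb_transit(vpc["attachment_subnet_azs"], odb_az="use1-az1")
    print(vpc["vpc_id"], "eligible" if ok else "not eligible")
```

In this example the multi-AZ application VPC is reported as not eligible and the single-AZ transit VPC as eligible, which is why the reference architecture carries a separate Transit VPC.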

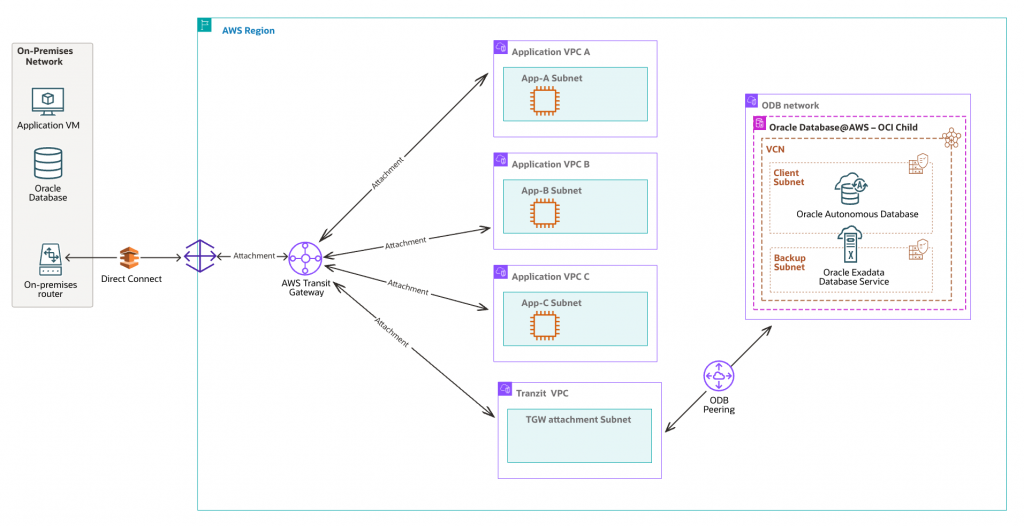

Starting Architecture

The starting architecture usually has three characteristics:

- One ODB network is peered directly with one VPC.

- That first VPC, commonly called the Transit VPC, has a transit gateway attachment.

- Other application VPCs reach the ODB network indirectly through the transit gateway and the Transit VPC.

This architecture made sense when direct peering options were narrower. It still makes sense when centralized routing is a deliberate choice. But if the goal is simply to let several application VPCs reach the same ODB network, the newer direct-peering model can reduce routing indirection and operational complexity.

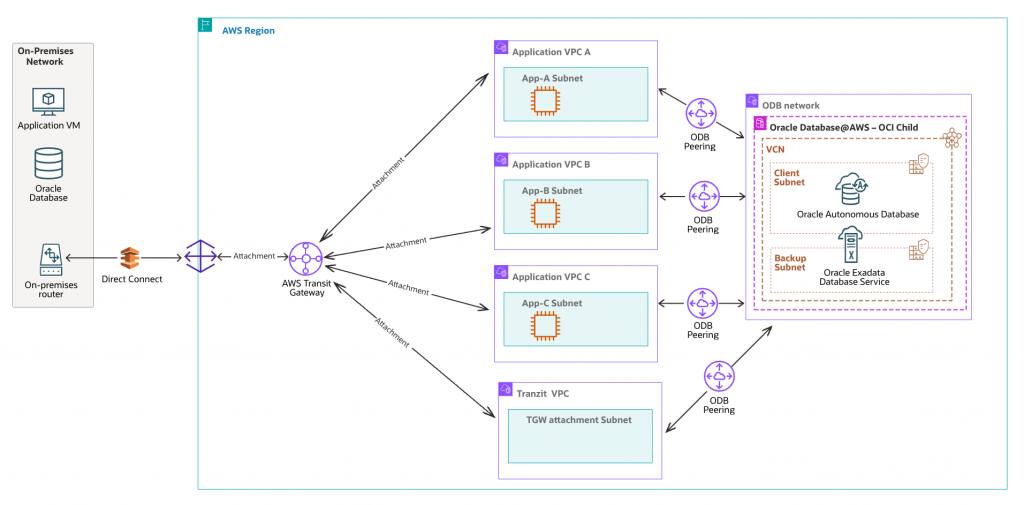

Target Architecture

The target architecture does not have to replace TGW entirely, and that distinction matters.

You can keep the original VPC connected to the transit gateway while also creating direct ODB peering connections from the same ODB network to additional VPCs. In other words, the migration is not necessarily “TGW out, direct peering in.” It can be “direct peering where it helps, TGW where it still makes sense.”

What to Check Before You Start

Before changing anything, validate the basic constraints.

- Confirm that the ODB network can support additional peering connections.

- Check that CIDR ranges do not overlap between the ODB network and every VPC you plan to peer.

- Review whether your ODB network was created before February 7, 2026, in US East (N. Virginia) or US West (Oregon), because older networks in those regions must be recreated before you can add more than one ODB peering connection.

- Confirm whether the original TGW-connected VPC still needs to act as a transit hub for other networks or on-premises paths.

- Document the current route tables and DNS settings before making any changes.
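Two of these checks are mechanical enough to script. The sketch below assumes you have already exported the ODB network's CIDR ranges (for example, its client and backup subnet ranges), each VPC's CIDR blocks, and the network's region and creation date from your own inventory. It uses Python's standard `ipaddress` module to find overlaps and restates the February 7, 2026 cutoff from the checklist. The helper names are hypothetical.

```python
from datetime import date
from ipaddress import ip_network

# Restates the checklist item: networks created before this date in these
# regions need recreation before adding more than one ODB peering connection.
LEGACY_CUTOFF = date(2026, 2, 7)
LEGACY_REGIONS = {"us-east-1", "us-west-2"}  # N. Virginia, Oregon


def overlapping_pairs(odb_cidrs, vpc_cidrs):
    """Return (odb_cidr, vpc_id, vpc_cidr) for every overlapping pair."""
    hits = []
    for odb in map(ip_network, odb_cidrs):
        for vpc_id, cidrs in vpc_cidrs.items():
            for cidr in map(ip_network, cidrs):
                if odb.overlaps(cidr):
                    hits.append((str(odb), vpc_id, str(cidr)))
    return hits


def needs_recreation(region, created_on):
    """True if the network predates multi-peering support in its region."""
    return region in LEGACY_REGIONS and created_on < LEGACY_CUTOFF


odb_ranges = ["10.50.0.0/20"]
vpc_ranges = {"vpc-a": ["10.10.0.0/16"], "vpc-b": ["10.50.8.0/21", "10.60.0.0/16"]}
print(overlapping_pairs(odb_ranges, vpc_ranges))
print(needs_recreation("us-east-1", date(2025, 11, 3)))
```

Here `vpc-b` is flagged because 10.50.8.0/21 falls inside 10.50.0.0/20. The date logic only mirrors the checklist; confirm the cutoff and regions against current AWS documentation before relying on it.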

It is also worth deciding up front which VPCs should remain behind TGW and which ones should be moved to direct ODB peering. Not every spoke VPC has to migrate at once.
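One way to make that up-front decision explicit is a small classification pass. This Python sketch assumes three yes/no facts per VPC, taken from the criteria in this post (centralized inspection, CIDR overlap, and broad shared connectivity beyond Oracle access). The `VpcProfile` fields and decision labels are hypothetical, and real planning will usually weigh more factors.

```python
from dataclasses import dataclass


@dataclass
class VpcProfile:
    """Hypothetical per-VPC facts gathered during planning."""
    vpc_id: str
    requires_central_inspection: bool  # traffic must traverse a central inspection point
    cidr_overlaps_odb: bool            # direct peering needs non-overlapping CIDRs
    broad_shared_connectivity: bool    # relies on TGW for much more than Oracle access


def classify(profile):
    """Return (decision, reason) for one VPC."""
    if profile.requires_central_inspection:
        return "stay_on_tgw", "centralized inspection is a hard requirement"
    if profile.cidr_overlaps_odb:
        return "stay_on_tgw", "CIDR overlaps the ODB network"
    if profile.broad_shared_connectivity:
        # Database routes could still move while the TGW attachment stays.
        return "review", "TGW may remain the anchor for other traffic"
    return "direct", "candidate for direct ODB peering"


fleet = [
    VpcProfile("vpc-payments", True, False, False),
    VpcProfile("vpc-reporting", False, False, False),
    VpcProfile("vpc-shared-svcs", False, False, True),
]
for p in fleet:
    print(p.vpc_id, *classify(p))
```

The `review` outcome reflects that a VPC can keep its TGW attachment for other traffic while its database routes move to direct peering.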

Step-by-Step Migration

Step 1: Inventory the Current Traffic Path

Start by identifying which application VPCs currently reach the ODB network through TGW. For each VPC, capture the subnets involved, the route tables used, the application source CIDRs, DNS behavior, and the destination databases or services in the ODB network.

This gives you a clean baseline and helps avoid moving VPCs that still depend on TGW for reasons beyond database connectivity.
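A useful output of this inventory is a split between TGW routes that exist only for ODB access and TGW routes that serve something else. The sketch below assumes you have flattened each captured route table into `(destination, target)` pairs; the TGW ID, CIDRs, and helper names are illustrative placeholders.

```python
from ipaddress import ip_network


def _within(inner, outer):
    """subnet_of() raises TypeError across IP versions, so guard first."""
    return inner.version == outer.version and inner.subnet_of(outer)


def split_tgw_routes(routes, tgw_id, odb_cidrs):
    """Classify routes that target the TGW.

    odb_bound: destination sits inside an ODB range (moves with the migration)
    shared:    destination covers an ODB range and more (keep, review later)
    other:     unrelated to the ODB network (a reason to keep the attachment)
    """
    odb_nets = [ip_network(c) for c in odb_cidrs]
    result = {"odb_bound": [], "shared": [], "other": []}
    for dest, target in routes:
        if target != tgw_id:
            continue  # local, NAT, and other targets are out of scope here
        net = ip_network(dest)
        if any(_within(net, o) for o in odb_nets):
            result["odb_bound"].append(dest)
        elif any(_within(o, net) for o in odb_nets):
            result["shared"].append(dest)
        else:
            result["other"].append(dest)
    return result


# Flattened (destination, target) pairs from one captured route table.
captured = [
    ("10.0.0.0/16", "local"),
    ("10.50.0.0/20", "tgw-0abc"),
    ("10.0.0.0/8", "tgw-0abc"),
    ("172.16.0.0/12", "tgw-0abc"),
    ("0.0.0.0/0", "nat-0123"),
    ("::/0", "tgw-0abc"),
]
print(split_tgw_routes(captured, "tgw-0abc", ["10.50.0.0/20"]))
```

For this sample, `10.50.0.0/20` is ODB-bound and can move, `10.0.0.0/8` covers the ODB range but also other networks, and `172.16.0.0/12` and `::/0` show that this VPC depends on TGW for reasons beyond database connectivity.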

Step 2: Decide Which VPC to Migrate First

Do not start with the most critical VPC unless there is a strong reason to do so. Pick a low-risk application VPC with clear routing and well-understood connectivity requirements.

The point of the first migration is to validate the pattern, not to prove bravery.
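If you want the selection criteria written down rather than implied, a small ranking function is enough. This sketch assumes a hypothetical `Candidate` record with your own criticality scale, a count of route tables to change, and a flag for undocumented dependencies. It excludes anything with unknown dependencies and then prefers the lowest-impact, simplest VPC.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical planning record; scales are your own, not AWS values."""
    vpc_id: str
    criticality: int          # 1 = low impact ... 3 = business-critical
    route_tables: int         # route tables that need ODB route changes
    undocumented_deps: bool   # unknown consumers or hard-coded paths


def pick_first(candidates):
    """Lowest-risk VPC with well-understood connectivity, or None."""
    eligible = [c for c in candidates if not c.undocumented_deps]
    if not eligible:
        return None
    # Prefer low criticality, then the simplest routing; vpc_id breaks ties.
    return min(eligible, key=lambda c: (c.criticality, c.route_tables, c.vpc_id))


pool = [
    Candidate("vpc-orders", 3, 2, False),
    Candidate("vpc-batch", 1, 4, False),
    Candidate("vpc-dev-tools", 1, 1, True),
    Candidate("vpc-reporting", 1, 2, False),
]
print(pick_first(pool).vpc_id)
```

`vpc-dev-tools` has the simplest routing but is skipped because its dependencies are not understood, so `vpc-reporting` is chosen.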

Step 3: Create a Direct ODB Peering Connection for the Target VPC

Create a new ODB peering connection between the existing ODB network and the application VPC you want to migrate. If needed, specify peer network CIDRs to limit access to the exact subnets that should reach the ODB network.

This is the core change. Once the peering exists, the target VPC no longer needs to rely on the transit path just to reach the databases.
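Before submitting peer network CIDRs, a quick local sanity check can catch typos. The sketch below assumes two rules of thumb rather than documented service behavior: every CIDR you specify should sit inside one of the VPC's CIDR blocks, and every application subnet that needs database access should be covered by at least one of them. Function and parameter names are hypothetical.

```python
from ipaddress import ip_network


def _within(inner, outer):
    """subnet_of() raises TypeError across IP versions, so guard first."""
    return inner.version == outer.version and inner.subnet_of(outer)


def check_peer_cidrs(vpc_cidrs, requested, app_subnets):
    """Return (outside_vpc, uncovered_app_subnets).

    outside_vpc: requested ranges not inside any VPC CIDR block
    uncovered_app_subnets: application subnets no requested range admits
    """
    vpc_nets = [ip_network(c) for c in vpc_cidrs]
    req_nets = [ip_network(c) for c in requested]
    outside = [str(r) for r in req_nets if not any(_within(r, v) for v in vpc_nets)]
    uncovered = [
        s for s in app_subnets
        if not any(_within(ip_network(s), r) for r in req_nets)
    ]
    return outside, uncovered


outside, uncovered = check_peer_cidrs(
    vpc_cidrs=["10.20.0.0/16"],
    requested=["10.20.1.0/24", "10.30.0.0/24"],
    app_subnets=["10.20.1.0/24", "10.20.2.0/24"],
)
print("outside VPC:", outside)
print("app subnets left out:", uncovered)
```

Here `10.30.0.0/24` is outside the VPC, and `10.20.2.0/24` would lose database access if it actually needs it.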

Step 4: Update Route Tables in the Newly Peered VPC

After the peering connection is created, update the route tables in that VPC so traffic destined for the ODB network goes directly to the ODB peering connection instead of toward TGW.

This step is where the migration becomes real. Until the route tables change, the new peering is just potential.
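VPC route tables choose the most specific matching route, so the effect of this step can be previewed offline. This sketch models a route table as a Python dict (one target per destination CIDR), replaces the ODB range's target, and resolves sample destinations before and after. `odb-peering-placeholder` stands in for whatever target identifier your route table shows for the ODB peering connection; all IDs and CIDRs are illustrative.

```python
from ipaddress import ip_address, ip_network


def resolve(route_table, destination_ip):
    """Pick the target with the longest matching prefix, as VPC routing does."""
    addr = ip_address(destination_ip)
    matches = [
        (ip_network(dest), target)
        for dest, target in route_table.items()
        if addr in ip_network(dest)
    ]
    if not matches:
        return None
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def cut_over(route_table, odb_cidrs, peering_target):
    """Return a copy where each ODB range targets the peering connection.

    A dict keeps one target per destination, mirroring how a route table
    holds a single route per destination CIDR (so this is a replace).
    """
    updated = dict(route_table)
    for cidr in odb_cidrs:
        updated[cidr] = peering_target
    return updated


PEERING = "odb-peering-placeholder"  # hypothetical target identifier
before = {
    "10.20.0.0/16": "local",
    "10.50.0.0/20": "tgw-0abc",
    "172.16.0.0/12": "tgw-0abc",
    "0.0.0.0/0": "nat-0123",
}
after = cut_over(before, ["10.50.0.0/20"], PEERING)

for ip in ("10.50.3.15", "172.16.9.1"):
    print(ip, resolve(before, ip), "->", resolve(after, ip))
```

Traffic to the ODB range moves to the peering target, while `172.16.9.1` still resolves to the TGW, which is the selective outcome this migration aims for.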

Step 5: Validate DNS and Connectivity

Test application connectivity from the migrated VPC to the target Oracle databases. Validate name resolution, connection strings, latency, security controls, and any database client assumptions that may have been built around the previous transit path.

In many environments, the routing change is simple but DNS validation is where operational surprises appear.
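Part of this validation can be scripted from a host in the migrated VPC. The sketch uses only Python's `socket`, `time`, and `ipaddress` modules to resolve a database host name, flag any resolved address outside the expected ODB ranges (a common symptom of DNS still pointing at an old path), and time a plain TCP connection. It does not authenticate to the database, so it complements rather than replaces an application-level test. The function name is hypothetical.

```python
import socket
import time
from ipaddress import ip_address, ip_network


def check_db_endpoint(host, port, expected_cidrs, timeout=3.0):
    """Resolve host, flag addresses outside the expected ODB ranges, and
    time a plain TCP connect to the first address that accepts."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    addresses = sorted({info[4][0] for info in infos})
    nets = [ip_network(c) for c in expected_cidrs]
    unexpected = [a for a in addresses if not any(ip_address(a) in n for n in nets)]

    connect_ms = None
    for addr in addresses:
        start = time.perf_counter()
        try:
            with socket.create_connection((addr, port), timeout=timeout):
                connect_ms = (time.perf_counter() - start) * 1000
                break
        except OSError:
            continue  # refused or timed out; try the next resolved address
    return {
        "addresses": addresses,
        "unexpected": unexpected,
        "reachable": connect_ms is not None,
        "connect_ms": connect_ms,
    }
```

A typical call would be `check_db_endpoint("db.example.internal", 1521, ["10.50.0.0/20"])`, where the host name and range are hypothetical and 1521 is only the common default Oracle listener port; use the host and port from your actual connection strings.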

Step 6: Remove the Old ODB Route Through TGW for That VPC

Once the direct path is validated, remove or retire the older route entries that sent this VPC’s ODB-bound traffic through TGW. This avoids ambiguous routing and makes the final architecture easier to understand.

Keep the TGW attachment itself if the VPC still needs it for other traffic patterns. The goal is not to remove TGW blindly. The goal is to remove unnecessary dependence on TGW for Oracle Database at AWS access.
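Deciding what is safe to delete can also be checked before touching anything. This Python sketch assumes route tables exported as `{route_table_id: {destination: target}}` with illustrative IDs. It marks a TGW route for deletion only when the route table already has a peering route covering the ODB range and the TGW route sits inside that range. Broader TGW routes are left in place because they may still carry other traffic.

```python
from ipaddress import ip_network


def _within(inner, outer):
    """subnet_of() raises TypeError across IP versions, so guard first."""
    return inner.version == outer.version and inner.subnet_of(outer)


def cleanup_plan(route_tables, odb_cidrs, tgw_id, peering_target):
    """Decide which leftover TGW routes for ODB ranges are safe to delete.

    Returns {"delete": [(route_table_id, dest)], "not_cut_over": [route_table_id]}.
    """
    odb_nets = [ip_network(c) for c in odb_cidrs]
    plan = {"delete": [], "not_cut_over": []}
    for rtb, routes in route_tables.items():
        # A table counts as cut over only if every ODB range has a covering
        # route that targets the peering connection.
        cut_over = all(
            any(t == peering_target and _within(odb, ip_network(d)) for d, t in routes.items())
            for odb in odb_nets
        )
        if not cut_over:
            plan["not_cut_over"].append(rtb)
            continue  # deleting TGW routes here would break database access
        for dest, target in routes.items():
            # A TGW route inside an ODB range would still win longest-prefix
            # match for part of that range, so it is stale and ambiguous.
            if target == tgw_id and any(_within(ip_network(dest), o) for o in odb_nets):
                plan["delete"].append((rtb, dest))
    return plan


tables = {
    "rtb-app-a": {
        "10.50.0.0/20": "odb-peering-placeholder",
        "10.50.0.0/24": "tgw-0abc",
        "10.0.0.0/8": "tgw-0abc",
    },
    "rtb-app-b": {"10.50.0.0/20": "tgw-0abc"},
}
result = cleanup_plan(tables, ["10.50.0.0/20"], "tgw-0abc", "odb-peering-placeholder")
print(result)
```

In the sample, `rtb-app-a` has a leftover `10.50.0.0/24` route to the TGW that would still capture part of the ODB range, which is exactly the ambiguity this step removes, while its broader `10.0.0.0/8` route is kept. `rtb-app-b` has not been cut over, so nothing is deleted there.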

Step 7: Repeat VPC by VPC

Repeat the same process for each additional application VPC that should move from indirect TGW-based access to direct ODB peering. Because each peering connection is its own resource, the migration can be incremental.

This is one of the nicest aspects of the new model. You do not need a big-bang network cutover.
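The incremental flow can be expressed as a loop that stops at the first VPC that fails validation. In this sketch every callback (`create_peering`, `update_routes`, `validate`, `retire_tgw_routes`, `rollback_routes`) is a hypothetical hook you would implement with your own tooling; the function only fixes the order of Steps 3 to 6 and the stop rule.

```python
def migrate_incrementally(vpc_ids, create_peering, update_routes, validate,
                          retire_tgw_routes, rollback_routes):
    """Run the per-VPC steps in order and stop at the first failure.

    Returns (migrated_vpc_ids, failed_vpc_id_or_None).
    """
    migrated = []
    for vpc_id in vpc_ids:
        create_peering(vpc_id)       # Step 3: direct ODB peering
        update_routes(vpc_id)        # Step 4: point ODB routes at the peering
        if not validate(vpc_id):     # Step 5: DNS and connectivity checks
            rollback_routes(vpc_id)  # point ODB routes back at the TGW
            return migrated, vpc_id
        retire_tgw_routes(vpc_id)    # Step 6: only after validation passes
        migrated.append(vpc_id)
    return migrated, None


# Dry run with stub callbacks that only record what would happen.
log = []
outcome = migrate_incrementally(
    ["vpc-reporting", "vpc-batch", "vpc-orders", "vpc-legacy"],
    create_peering=lambda v: log.append(("peer", v)),
    update_routes=lambda v: log.append(("routes", v)),
    validate=lambda v: v != "vpc-orders",
    retire_tgw_routes=lambda v: log.append(("retire", v)),
    rollback_routes=lambda v: log.append(("rollback", v)),
)
print(outcome)
```

The stub run migrates the first two VPCs, rolls back routes for `vpc-orders`, and never touches `vpc-legacy`. Leaving the failed VPC's peering connection in place so it can be retried is a design choice of this sketch; removing it is equally reasonable.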

Step 8: Reassess the Role of the Original TGW-Attached VPC

After a few VPCs move to direct peering, revisit the purpose of the original VPC that remains attached to TGW. It may still be valuable as a central transit point for on-premises access, shared services, or VPCs that are not good candidates for direct peering.

Or you may discover that its transit role can be reduced over time.

What Changes Operationally?

The most visible change is that routing becomes more explicit. Applications in directly peered VPCs reach the ODB network through their own dedicated ODB peering connection. That reduces the amount of “hidden” network dependency on the TGW-connected VPC.

The second change is that peering lifecycle management becomes more granular. Each application VPC can now be added, updated, or removed as an independent peering relationship, rather than as a spoke behind a single transit-based database access path.

When Not to Migrate

Not every environment should rush toward direct peering everywhere.

- If centralized routing and inspection are a hard requirement, TGW may still be the cleaner control point.

- If your applications already depend on broad shared connectivity beyond Oracle database access, the transit pattern may still be the right anchor.

Practical Closing Advice

If I were planning this migration, I would not frame it as a network redesign project. I would frame it as a dependency-reduction exercise.

Migrate one VPC at a time. Validate routing and DNS carefully. Keep TGW where it still adds value. Remove it only where it no longer justifies the extra indirection.

That is the real value of the new Oracle Database at AWS networking model. It lets us simplify selectively, instead of redesigning everything at once.