Introduction

Many AWS networking architectures today are built around AWS Transit Gateway (TGW), which provides a scalable hub-and-spoke model for connecting multiple VPCs, hybrid environments, and shared services. It removes the need for complex, hard-to-scale point-to-point VPC peering connections. Because each VPC or network attaches to a single TGW, the network topology stays simple and routing is controlled centrally.

While TGW is often documented purely from an AWS networking standpoint, it is important to reframe these architectures through the lens of Oracle Database@AWS (DB@AWS) and how it integrates into real-world enterprise designs.

This blog provides an architectural perspective on how DB@AWS fits naturally into common AWS TGW-based scenarios, enabling customers to modernize application architectures while continuing to leverage Oracle Database services.

How DB@AWS Changes the Conversation

Traditional AWS networking discussions focus on connecting application tiers and generic databases. With DB@AWS, the database tier becomes a first-class, Oracle-managed service running within AWS, bringing:

- Native Oracle Database capabilities (Exadata-based performance, Data Guard, RMAN)

- Tight integration with AWS networking constructs (VPCs, TGW, Direct Connect)

- Reduced need for complex cross-cloud architectures

As a result, TGW is no longer just a connectivity hub; it becomes the central fabric that enables secure, scalable access to DB@AWS from diverse application landscapes.

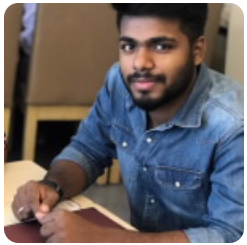

1. Centralized Connectivity for Application VPCs

The AWS Pattern

AWS TGW is commonly used to connect multiple application VPCs (dev, test, prod, different teams/accounts) in a hub-and-spoke model.

DB@AWS Perspective

In this model, DB@AWS acts as a shared database platform accessed by multiple application VPCs.

- Each application VPC attaches to TGW

- DB@AWS is exposed via dedicated VPC attachments

- Routing is centrally controlled using TGW route tables
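As a rough illustration of centralized routing control, the following Python sketch models one TGW route table as a mapping from destination CIDR to target attachment and resolves a destination by longest-prefix match, which is how TGW chooses among overlapping routes. All attachment names and CIDR ranges are hypothetical placeholders, and this is a local simulation, not a call to the AWS API.

```python
import ipaddress
from typing import Dict, Optional

# Hypothetical shared TGW route table: destination CIDR -> target attachment.
# Names and CIDRs are illustrative placeholders, not real AWS identifiers.
SHARED_RT: Dict[str, str] = {
    "10.10.0.0/16": "attach-app-prod",
    "10.20.0.0/16": "attach-app-test",
    "10.50.0.0/16": "attach-db-transit",  # VPC that fronts DB@AWS (assumed)
}


def lookup(route_table: Dict[str, str], dest_ip: str) -> Optional[str]:
    """Return the target of the most specific route covering dest_ip, or None."""
    addr = ipaddress.ip_address(dest_ip)
    best_net, best_target = None, None
    for cidr, target in route_table.items():
        net = ipaddress.ip_network(cidr)
        if addr in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net, best_target = net, target
    return best_target


print(lookup(SHARED_RT, "10.50.8.15"))   # attach-db-transit
print(lookup(SHARED_RT, "192.168.1.1"))  # None: no matching route, so TGW drops it
```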

Key Value

- Simplifies access to Oracle databases across multiple application domains

- Eliminates the need for duplicated database deployments per VPC

- Enables consistent security policies for database access

Practical Insight

Treat DB@AWS as a shared service tier rather than embedding databases inside each application VPC. TGW becomes the enforcement point for:

- Network segmentation (dev vs prod)

- Controlled east-west traffic

- Centralized inspection if required
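The dev-versus-prod segmentation above can be sketched by giving each environment its own TGW route table and associating each application attachment with exactly one of them, so a dev spoke simply has no route to the production database range. The environment names, CIDRs, and attachment names are hypothetical, and the check covers TGW routing only; VPC route tables, security groups, and database access controls still apply on top.

```python
import ipaddress
from typing import Dict

PROD_DB = "10.50.0.0/16"     # hypothetical production DB@AWS range
NONPROD_DB = "10.60.0.0/16"  # hypothetical non-production DB@AWS range

# One TGW route table per environment; each holds only that environment's DB route.
ROUTE_TABLES: Dict[str, Dict[str, str]] = {
    "rt-prod": {PROD_DB: "attach-db-prod"},
    "rt-nonprod": {NONPROD_DB: "attach-db-nonprod"},
}
# Each application attachment is associated with exactly one route table.
ASSOCIATIONS: Dict[str, str] = {
    "attach-app-prod": "rt-prod",
    "attach-app-dev": "rt-nonprod",
    "attach-app-test": "rt-nonprod",
}


def can_reach(src_attachment: str, dest_ip: str) -> bool:
    """True if the source's associated route table has any route covering dest_ip."""
    table = ROUTE_TABLES[ASSOCIATIONS[src_attachment]]
    addr = ipaddress.ip_address(dest_ip)
    return any(addr in ipaddress.ip_network(cidr) for cidr in table)


print(can_reach("attach-app-dev", "10.50.1.10"))   # False: dev has no prod route
print(can_reach("attach-app-prod", "10.50.1.10"))  # True
```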

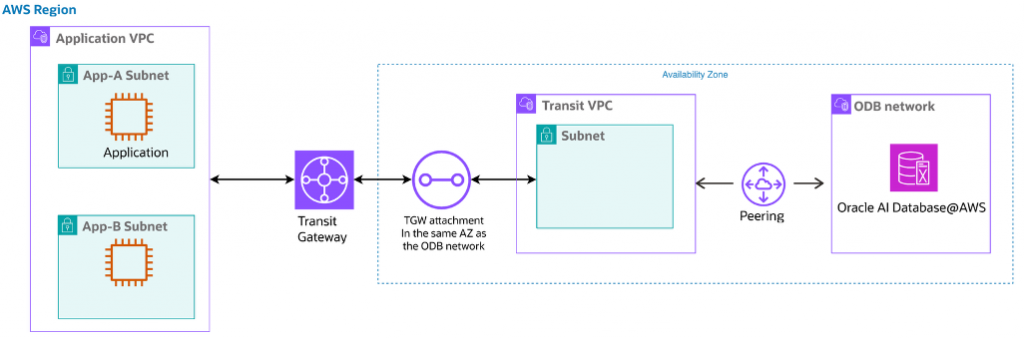

2. Centralized Security Inspection (Firewall VPC)

The AWS Pattern

Traffic from spoke VPCs is routed through a centralized firewall or inspection VPC using TGW.

TGW acts as a hub that connects all application VPCs to a dedicated inspection or firewall VPC. Instead of allowing direct VPC-to-VPC communication, it ensures that all inter-VPC and outbound traffic passes through a centralized security layer.

DB@AWS Perspective

When DB@AWS is introduced, this pattern becomes critical for:

- Database access control

- Regulatory compliance

- Threat inspection for sensitive data flows

Architecture Alignment

- Application VPCs → TGW → Firewall VPC → TGW → Transit VPC → DB@AWS

- All database traffic is inspected before reaching DB@AWS
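The App → TGW → Firewall VPC → TGW → Transit VPC path can be traced with a small simulation that uses two TGW route tables: one associated with the application and transit attachments, which sends everything to the firewall, and one associated with the firewall attachment, which holds the post-inspection routes. This is a simplified model under stated assumptions: names and CIDRs are hypothetical, and the firewall VPC's internal routing is abstracted as traffic re-entering the TGW on the same firewall attachment.

```python
import ipaddress
from typing import Dict, List, Optional

# Hypothetical TGW configuration (placeholder names and CIDRs).
ROUTE_TABLES: Dict[str, Dict[str, str]] = {
    # Associated with spoke attachments: send everything to the firewall VPC.
    "rt-spokes": {"0.0.0.0/0": "attach-firewall"},
    # Associated with the firewall attachment: routes used after inspection.
    "rt-firewall": {
        "10.10.0.0/16": "attach-app-prod",
        "10.50.0.0/16": "attach-transit",  # transit VPC in front of DB@AWS
    },
}
ASSOCIATIONS = {
    "attach-app-prod": "rt-spokes",
    "attach-transit": "rt-spokes",
    "attach-firewall": "rt-firewall",
}
# Attachments where traffic is processed and then sent back into the TGW.
HAIRPIN = {"attach-firewall"}


def longest_match(table: Dict[str, str], addr) -> Optional[str]:
    """Longest-prefix-match lookup; None means no route."""
    hits = [(ipaddress.ip_network(c), t) for c, t in table.items()
            if addr in ipaddress.ip_network(c)]
    return max(hits, key=lambda h: h[0].prefixlen)[1] if hits else None


def trace(src: str, dest_ip: str, max_hops: int = 5) -> List[str]:
    """List the attachments a packet traverses until it leaves the TGW."""
    addr = ipaddress.ip_address(dest_ip)
    path, current = [src], src
    for _ in range(max_hops):
        nxt = longest_match(ROUTE_TABLES[ASSOCIATIONS[current]], addr)
        if nxt is None:
            return path + ["DROP"]
        path.append(nxt)
        if nxt not in HAIRPIN:
            return path
        current = nxt  # inspected traffic re-enters on the firewall attachment
    raise RuntimeError("possible routing loop")


print(trace("attach-app-prod", "10.50.4.4"))
# ['attach-app-prod', 'attach-firewall', 'attach-transit']
print(trace("attach-transit", "10.10.1.1"))
# ['attach-transit', 'attach-firewall', 'attach-app-prod']
```

Note that the reply from the database side also passes through the firewall, which a stateful firewall needs in order to see both halves of each connection.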

Key Value

- Enforces consistent security policies for all database access

- Provides visibility into application-to-database (SQL) traffic patterns

- Supports compliance-heavy industries (finance, healthcare)

Practical Insight

Avoid direct VPC-to-DB@AWS access in regulated environments. Instead:

- Use TGW route tables to force traffic through inspection layers

- Configure TGW route tables so all east-west traffic (App ↔ DB) is routed via a dedicated firewall/inspection VPC, eliminating any direct spoke-to-spoke paths

- Enable appliance mode on TGW attachments for the firewall VPC to ensure symmetric routing, which is critical for stateful inspection and consistent policy enforcement

- Control route propagation explicitly and add blackhole routes in TGW to prevent traffic from bypassing the firewall path and to enforce least-privilege network access

This creates a defense-in-depth model, spanning network and database layers.
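Bypass paths often come from a single more-specific route, for example one propagated from the transit VPC attachment into a spoke route table, which wins over the 0.0.0.0/0 route to the firewall under longest-prefix match. The sketch below is a conservative audit, assuming route tables exported as simple CIDR-to-target mappings: it flags any route overlapping the database range whose target is neither the firewall attachment nor a blackhole. The CIDRs, table names, and `blackhole` marker are hypothetical.

```python
import ipaddress
from typing import Dict, List

DB_RANGE = ipaddress.ip_network("10.50.0.0/16")  # hypothetical DB@AWS-facing range
ALLOWED_TARGETS = {"attach-firewall", "blackhole"}

# Hypothetical spoke route tables (destination CIDR -> target).
SPOKE_TABLES: Dict[str, Dict[str, str]] = {
    "rt-spokes-ok": {"0.0.0.0/0": "attach-firewall"},
    # A propagated transit-VPC route is more specific than 0.0.0.0/0, so it
    # would send DB traffic straight to the transit VPC, skipping inspection.
    "rt-spokes-leaky": {"0.0.0.0/0": "attach-firewall",
                        "10.50.0.0/16": "attach-transit"},
    # An explicit blackhole keeps a sensitive subnet unreachable from spokes.
    "rt-spokes-pinned": {"0.0.0.0/0": "attach-firewall",
                         "10.50.9.0/24": "blackhole"},
}


def find_bypasses(tables: Dict[str, Dict[str, str]]) -> List[str]:
    """Flag routes overlapping DB_RANGE that go neither to the firewall nor a blackhole."""
    findings = []
    for table_name, routes in tables.items():
        for cidr, target in routes.items():
            if ipaddress.ip_network(cidr).overlaps(DB_RANGE) and target not in ALLOWED_TARGETS:
                findings.append(f"{table_name}: {cidr} -> {target}")
    return findings


print(find_bypasses(SPOKE_TABLES))
# ['rt-spokes-leaky: 10.50.0.0/16 -> attach-transit']
```

The check is deliberately conservative: it may also flag a broad route that is already shadowed by a more specific firewall route, which is usually worth a human review anyway.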

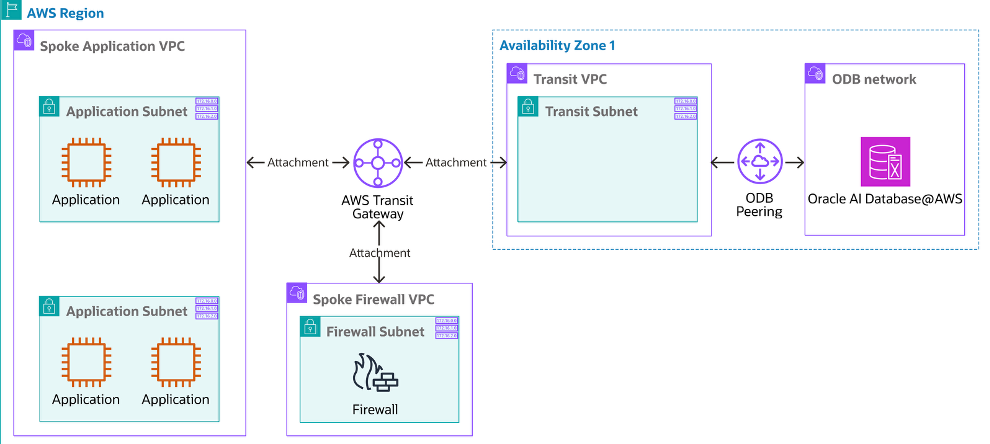

3. Hybrid Hub-and-Spoke (On-Prem ↔ AWS ↔ DB@AWS)

The AWS Pattern

TGW connects on-premises networks with multiple AWS VPCs using VPN or Direct Connect.

AWS Transit Gateway acts as a central networking hub that connects multiple VPCs (spokes) and on-premises networks over VPN or Direct Connect, replacing complex mesh architectures with a simpler, scalable hub-and-spoke model.

DB@AWS Perspective

DB@AWS becomes a strategic target for database modernization in hybrid environments.

Common Flows

- On-prem applications → DB@AWS

- AWS applications → DB@AWS

- Mixed workloads accessing a centralized Oracle database

Key Value

- Smooth transition from on-prem Oracle databases to DB@AWS

- Avoids cross-cloud latency (OCI ↔ AWS) by keeping workloads within AWS

- Simplifies hybrid connectivity through a single TGW hub

Practical Insight

Avoid direct on-prem-to-DB@AWS connectivity in regulated hybrid environments. Instead:

- Use AWS Transit Gateway as the central hub so all on-prem traffic enters AWS through a single controlled routing point

- Force on-prem → TGW → firewall/inspection VPC → DB@AWS paths using TGW route tables, so database access is never direct

- Place the Oracle DB VPC in a spoke model with no direct route back to on-prem to ensure consistent policy enforcement

- Keep on-prem connectivity centralized through TGW instead of separate VPN or Direct Connect connections into individual VPCs, which reduces complexity and the risk of bypass

This creates a secure hybrid hub-and-spoke model in which TGW controls the path, the firewall enforces inspection, and Oracle Database security controls protect the database itself.
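The routing rules in this list can be checked in both directions at once: on-prem traffic arriving at TGW must be handed to the firewall, and the DB side must send its replies back through the firewall rather than straight to on-prem, since a stateful firewall needs to see both halves of each connection. The sketch below is a minimal model under assumed names: `attach-dxgw` is a hypothetical stand-in for the Direct Connect gateway or VPN attachment, and all CIDRs are placeholders.

```python
import ipaddress
from typing import Dict, Optional

ONPREM = "172.16.0.0/12"  # hypothetical on-prem range advertised over DX/VPN
DB_SIDE = "10.50.0.0/16"  # hypothetical VPC range that fronts DB@AWS

ROUTE_TABLES: Dict[str, Dict[str, str]] = {
    "rt-onprem": {DB_SIDE: "attach-firewall"},  # associated with attach-dxgw
    "rt-db": {ONPREM: "attach-firewall"},       # associated with attach-transit
    "rt-firewall": {ONPREM: "attach-dxgw", DB_SIDE: "attach-transit"},
}
ASSOCIATIONS = {
    "attach-dxgw": "rt-onprem",
    "attach-transit": "rt-db",
    "attach-firewall": "rt-firewall",
}


def next_hop(attachment: str, dest_ip: str) -> Optional[str]:
    """Longest-prefix-match lookup in the route table associated with `attachment`."""
    addr = ipaddress.ip_address(dest_ip)
    table = ROUTE_TABLES[ASSOCIATIONS[attachment]]
    hits = [(ipaddress.ip_network(c), t) for c, t in table.items()
            if addr in ipaddress.ip_network(c)]
    return max(hits, key=lambda h: h[0].prefixlen)[1] if hits else None


def inspected_both_ways(onprem_ip: str, db_ip: str) -> bool:
    """Request and reply must both be handed to the firewall first."""
    return (next_hop("attach-dxgw", db_ip) == "attach-firewall"
            and next_hop("attach-transit", onprem_ip) == "attach-firewall")


print(inspected_both_ways("172.16.5.9", "10.50.1.1"))  # True
# A more-specific "shortcut" on the DB side breaks symmetry for that subnet.
ROUTE_TABLES["rt-db"]["172.16.5.0/24"] = "attach-dxgw"
print(inspected_both_ways("172.16.5.9", "10.50.1.1"))  # False
```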

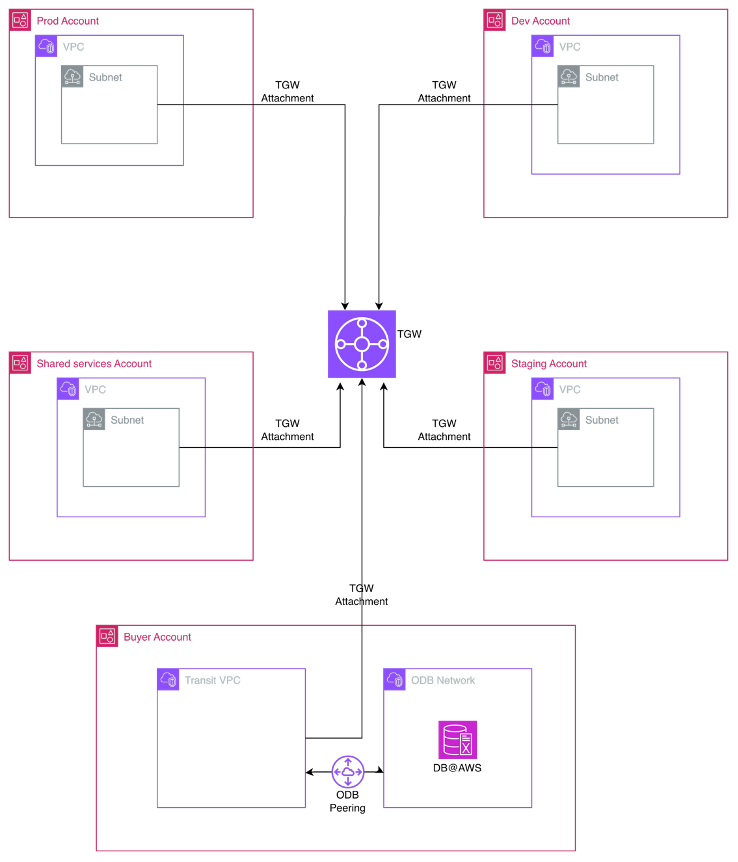

4. Multi-Account / Multi-OU Governance

The AWS Pattern

Organizations use multiple AWS accounts for isolation (Dev, Test, Prod), connected via TGW and shared using AWS RAM.

In a multi-account or cross-account AWS environment, AWS Transit Gateway (TGW) acts as a centralized networking hub. It is typically deployed in a dedicated networking or shared services account and connects VPCs from multiple accounts.

DB@AWS Perspective

DB@AWS serves as a centralized database platform across accounts.

Architecture

- TGW deployed in a shared networking account

- DB@AWS accessible from multiple application accounts

- Exadata infrastructure and the ODB network must reside in the buyer account and be shared with trusted accounts (for example, through AWS RAM)

Key Value

- Centralized database management with decentralized application ownership

- Strong isolation between environments

- Simplified governance and auditing

Practical Insight

Design for controlled sharing, not full connectivity:

- Allow Dev/Test access only to non-production DB@AWS instances

- Use TGW to enable centralized database access from multiple application accounts, ensuring all app-to-DB communication flows through controlled routing paths

- Enable shared services integration across accounts via TGW without exposing the database broadly

- Onboard new application accounts at scale: new consumers can securely access DB@AWS by attaching to TGW, without modifying the existing database network architecture
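Here is a toy model of these controlled-sharing rules, assuming a simple environment-to-route-table mapping. Onboarding a new account only adds that account's association, so the DB-side routes are never touched, and dev/test accounts can only land on the non-production route table. Account IDs, table names, and environment labels are hypothetical, and the sketch does not model AWS RAM sharing or the real TGW attachment API.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet

# Route table name -> DB@AWS environments reachable through it (hypothetical).
DEFAULT_TABLES: Dict[str, FrozenSet[str]] = {
    "rt-prod": frozenset({"db-prod"}),
    "rt-nonprod": frozenset({"db-nonprod"}),
}
# Dev and test share the non-production table; only prod reaches production.
ENV_TO_TABLE = {"prod": "rt-prod", "test": "rt-nonprod", "dev": "rt-nonprod"}


@dataclass
class SharedTgw:
    """Toy model of a TGW owned by a shared networking account."""
    tables: Dict[str, FrozenSet[str]] = field(default_factory=lambda: dict(DEFAULT_TABLES))
    associations: Dict[str, str] = field(default_factory=dict)  # account -> table

    def onboard(self, account_id: str, env: str) -> str:
        """Attach a new application account; only its own association changes."""
        if env not in ENV_TO_TABLE:
            raise ValueError(f"unknown environment: {env}")
        table = ENV_TO_TABLE[env]
        self.associations[account_id] = table
        return table

    def reachable_dbs(self, account_id: str) -> FrozenSet[str]:
        return self.tables[self.associations[account_id]]


tgw = SharedTgw()
tgw.onboard("111111111111", "prod")
tgw.onboard("222222222222", "dev")
print(sorted(tgw.reachable_dbs("222222222222")))  # ['db-nonprod']
```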

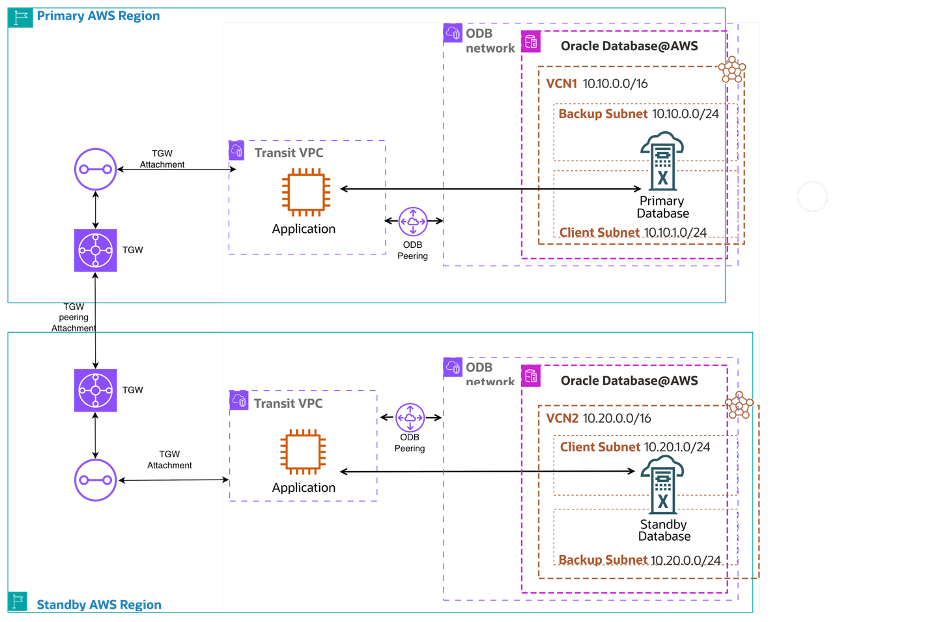

5. Oracle Disaster Recovery (DR) with TGW

The AWS Pattern

In a disaster recovery (DR) architecture on AWS, Transit Gateway (TGW) provides centralized, reliable, and scalable network connectivity between primary and standby database environments, which are often deployed across different VPCs, accounts, or even Regions. TGW acts as a hub that connects application tiers, database tiers, and hybrid on-prem systems in a controlled manner.

DB@AWS Perspective

DB@AWS integrates naturally into Oracle DR designs built on technologies such as Data Guard, with TGW providing connectivity between the primary and standby databases.

Typical Architecture

- Primary DB@AWS in one VPC/region

- Standby DB@AWS in another VPC/region

- TGW enables controlled connectivity for replication traffic
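Data Guard traffic has to flow in both directions between the two sites (for example, the roles reverse after a switchover), so it is worth checking that routes exist each way. The sketch below models two Regional TGWs joined by a peering attachment, each with one route table for its local database attachment and one for the peering attachment. Every name and CIDR is a hypothetical placeholder, and the model ignores VPC-level routes and security rules.

```python
import ipaddress
from typing import Optional, Tuple

PRIMARY_CIDR = "10.50.0.0/16"   # hypothetical primary-side range
STANDBY_CIDR = "10.150.0.0/16"  # hypothetical standby-side range


def regional_tgw(local: str, remote: str, peer: str) -> dict:
    """One Region's TGW: DB attachment sends remote to peer; peer sends local to DB."""
    return {
        "associations": {"attach-db": "rt-db", "attach-peer": "rt-peer"},
        "tables": {"rt-db": {remote: "attach-peer"}, "rt-peer": {local: "attach-db"}},
        "peer": peer,
    }


TGWS = {
    "tgw-primary": regional_tgw(PRIMARY_CIDR, STANDBY_CIDR, "tgw-standby"),
    "tgw-standby": regional_tgw(STANDBY_CIDR, PRIMARY_CIDR, "tgw-primary"),
}


def deliver(tgw: str, attachment: str, dest_ip: str,
            max_hops: int = 4) -> Optional[Tuple[str, str]]:
    """Return (tgw, exit attachment) for dest_ip, crossing the peering if needed."""
    addr = ipaddress.ip_address(dest_ip)
    for _ in range(max_hops):
        cfg = TGWS[tgw]
        table = cfg["tables"][cfg["associations"][attachment]]
        # Tables here never overlap, so the first match is also the longest match.
        target = next((t for c, t in table.items() if addr in ipaddress.ip_network(c)), None)
        if target is None:
            return None
        if target != "attach-peer":
            return tgw, target
        # Cross to the other Region; traffic arrives on that TGW's peering attachment.
        tgw, attachment = cfg["peer"], "attach-peer"
    return None


print(deliver("tgw-primary", "attach-db", "10.150.3.3"))  # ('tgw-standby', 'attach-db')
print(deliver("tgw-standby", "attach-db", "10.50.1.1"))   # ('tgw-primary', 'attach-db')
```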

Key Value

- High-throughput, low-latency replication

- Simplified DR topology using centralized routing

- Isolation of DR environments with controlled failover paths

Practical Insight

Avoid ad-hoc or direct primary-to-standby connectivity in Oracle DR setups. Instead:

- Use Transit Gateway as the central routing layer between primary and DR environments (across VPCs, accounts, or regions) to simplify and standardize connectivity

- Route all Data Guard replication traffic (redo transport) via TGW to ensure consistent, controllable, and observable network paths

- Preconfigure TGW route tables for failover scenarios, so application traffic can be quickly redirected to the standby DB without major network changes

- Use inter-region TGW peering for cross-region DR to maintain a clean and scalable architecture instead of managing multiple point-to-point connections
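One way to act on preconfiguring TGW route tables for failover is a readiness check: every application route table should already hold a usable route to both the primary and the standby database ranges, so a Data Guard role transition needs no routing change. This is a sketch with hypothetical table names and CIDRs; the switch itself still happens at the Oracle layer (role transition and client connection configuration), not in TGW.

```python
import ipaddress
from typing import Dict, List

PRIMARY_DB = ipaddress.ip_network("10.50.0.0/16")   # hypothetical primary range
STANDBY_DB = ipaddress.ip_network("10.150.0.0/16")  # hypothetical standby range

APP_ROUTE_TABLES: Dict[str, Dict[str, str]] = {
    "rt-app-prod": {"10.50.0.0/16": "attach-db", "10.150.0.0/16": "attach-peer"},
    "rt-app-batch": {"10.50.0.0/16": "attach-db"},  # no standby route yet
    "rt-app-reports": {"10.0.0.0/8": "attach-firewall",
                       "10.150.0.0/16": "blackhole"},  # standby blocked
}


def has_usable_route(routes: Dict[str, str], net) -> bool:
    """True if the most specific route covering `net` exists and is not a blackhole."""
    covering = [(ipaddress.ip_network(c), t) for c, t in routes.items()
                if net.subnet_of(ipaddress.ip_network(c))]
    if not covering:
        return False
    return max(covering, key=lambda r: r[0].prefixlen)[1] != "blackhole"


def failover_gaps(tables: Dict[str, Dict[str, str]]) -> List[str]:
    """Names of route tables that cannot reach both primary and standby."""
    return sorted(name for name, routes in tables.items()
                  if not (has_usable_route(routes, PRIMARY_DB)
                          and has_usable_route(routes, STANDBY_DB)))


print(failover_gaps(APP_ROUTE_TABLES))  # ['rt-app-batch', 'rt-app-reports']
```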

Key Takeaways

From a network architecture standpoint, the introduction of DB@AWS shifts TGW from a generic networking construct to a strategic enabler for Oracle database architectures in AWS.

Across all scenarios, TGW provides the connectivity fabric and DB@AWS provides the enterprise-grade database platform. Together, they enable scalable, secure, and governed architectures.

Design Principles

- Treat DB@AWS as a shared service

- Use TGW for segmentation and control

- Enforce security through centralized inspection where needed

- Align network design with database architecture (DR, hybrid, governance)

Final Thoughts

Rather than viewing TGW purely as an AWS networking tool, you should design with a database-first mindset when adopting DB@AWS. The combination of TGW and DB@AWS enables organizations to modernize Oracle workloads within AWS while maintaining the performance, resilience, and governance expected from Oracle environments.

The key is to bridge the gap between AWS-native networking patterns and Oracle database best practices.