Modern cloud applications are rarely deployed in a single VPC. Organizations typically separate workloads across multiple VPCs for security, scalability, and operational isolation. When using Oracle Database@AWS (ODB), networking design has previously required careful planning because of a key limitation in how ODB networks connected to application environments.

This post explains how the multi-VPC peering for ODB networks feature improves network design and operational flexibility.

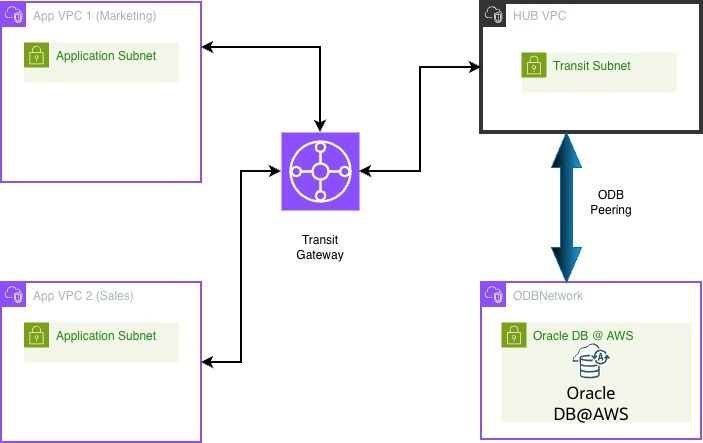

The Previous Limitation: One VPC per ODB Network

Previously, an ODB network could be peered with only one Amazon VPC. This meant that only workloads within that specific VPC could directly communicate with the Oracle Database network.

Because only one VPC could directly peer with the ODB network, organizations had to introduce an additional networking layer, a hub VPC, to enable access from other VPCs.

Architecture Before the Feature

While effective, this architecture introduced a major challenge: as organizations scaled to many VPCs, managing these network dependencies became increasingly complex.

The New Capability

Multi-VPC peering for ODB networks removes the previous one-VPC limitation and allows application environments to connect directly to the database network. This post covers three use cases for the feature.

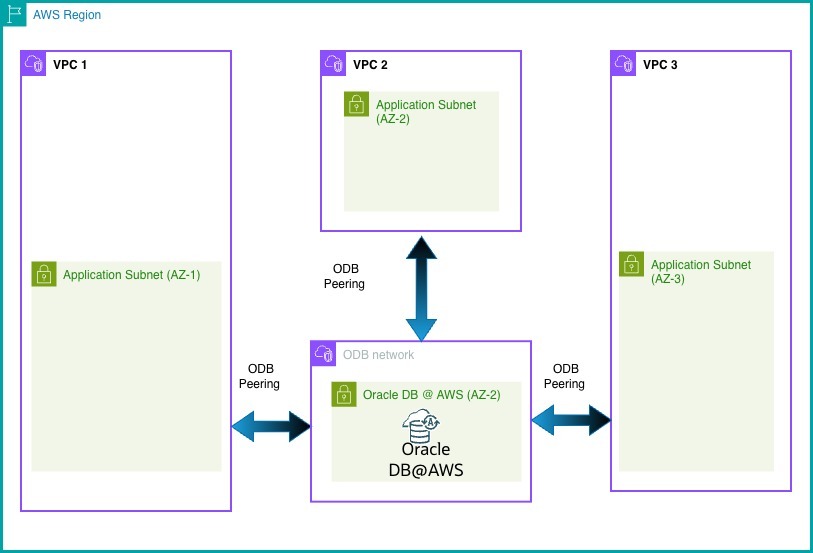

- Multiple VPCs Peered with a Single ODB Network

With multi-VPC peering, multiple application VPCs can directly connect to the ODB network without requiring a hub VPC.

In this architecture, application subnets across multiple AZs (AZ-1, AZ-2, AZ-3) establish private connectivity to the Oracle Autonomous Database hosted in AZ-2. This cross-AZ communication ensures that applications are not tightly coupled to a single zone.

Even when an application runs in a different AZ than the database, it can securely and efficiently access the database over the AWS backbone network.
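To make the contrast with the hub design concrete, here is a minimal sketch in plain Python that models peering connections as a set of pairs and lists which application VPCs would still need an intermediate hop. It does not call any AWS API, and every identifier in it (`vpc-hub`, `vpc-app-a`, `odb-net-1`, and so on) is a hypothetical placeholder.

```python
from typing import List, Set, Tuple

# A peering is modelled as (VPC id, ODB network id). All ids are hypothetical.
Peering = Tuple[str, str]


def directly_peered(vpc: str, odb_network: str, peerings: Set[Peering]) -> bool:
    """Return True if the VPC has its own peering with the ODB network."""
    return (vpc, odb_network) in peerings


def vpcs_needing_hub(app_vpcs: List[str], odb_network: str,
                     peerings: Set[Peering]) -> List[str]:
    """List the application VPCs that cannot reach the ODB network directly."""
    return [v for v in app_vpcs if not directly_peered(v, odb_network, peerings)]


app_vpcs = ["vpc-app-a", "vpc-app-b", "vpc-app-c"]

# Previous model: only the hub VPC is peered with the ODB network.
before = {("vpc-hub", "odb-net-1")}

# Multi-VPC peering: each application VPC is peered directly.
after = {(vpc, "odb-net-1") for vpc in app_vpcs}

needs_hub_before = vpcs_needing_hub(app_vpcs, "odb-net-1", before)
needs_hub_after = vpcs_needing_hub(app_vpcs, "odb-net-1", after)
```

In the previous model every application VPC appears in `needs_hub_before`; with direct peering, `needs_hub_after` is empty.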

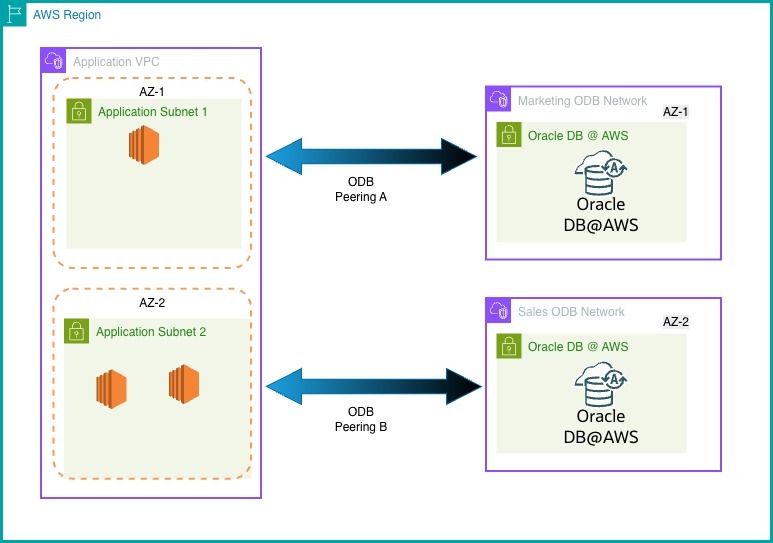

- One VPC Connecting to Multiple ODB Networks

The feature also supports the opposite architecture in which multiple ODB networks are connected to a single VPC.

This architecture enables a flexible, scalable pattern in which applications in a single VPC can securely interact with multiple ODB networks deployed across AZs (AZ1 and AZ2), all peered to that VPC (see Figure 3).

Advantages:

- High Availability Across Availability Zones

The design distributes application and database components across multiple Availability Zones (AZ1 and AZ2) that are part of the same VPC, improving resilience and allowing operation to continue if one zone fails.

- Support for One-to-Many Architectures

A single application tier can connect to multiple database deployments, making it ideal for environments with separate databases for different business units, workloads, or tenants.
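As an illustration of the one-to-many pattern, the sketch below uses Python's standard `ipaddress` module to work out which ODB network a database address belongs to, and to check that the peered networks' CIDR ranges do not overlap. The network names and CIDR ranges are invented for the example, and the non-overlap check reflects a general routing assumption rather than a rule stated in this post.

```python
import ipaddress
from typing import Dict, List, Optional, Tuple

# Hypothetical ODB networks peered to one VPC, with made-up CIDR ranges.
ODB_NETWORK_CIDRS: Dict[str, str] = {
    "odb-net-az1": "10.10.0.0/24",
    "odb-net-az2": "10.20.0.0/24",
}


def odb_network_for(ip: str, cidrs: Dict[str, str] = ODB_NETWORK_CIDRS) -> Optional[str]:
    """Return the name of the ODB network whose CIDR contains ip, if any."""
    addr = ipaddress.ip_address(ip)
    for name, cidr in cidrs.items():
        if addr in ipaddress.ip_network(cidr):
            return name
    return None


def find_overlaps(cidrs: Dict[str, str]) -> List[Tuple[str, str]]:
    """Return pairs of network names whose CIDR ranges overlap."""
    names = sorted(cidrs)
    nets = {n: ipaddress.ip_network(cidrs[n]) for n in names}
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if nets[a].overlaps(nets[b])
    ]
```

For example, `odb_network_for("10.20.0.7")` resolves to the hypothetical `odb-net-az2`, while an address outside both ranges returns `None`.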

Does this feature eliminate the need for a transit VPC?

The answer is no. If connectivity is required from on-premises environments, Transit Gateway (TGW), or Cloud WAN, a transit VPC may still be required.

This post looks at an example design using TGW and explains why a transit VPC is required.

A Transit VPC pattern can be used when leveraging TGW for ODB connectivity, enabling centralized routing for firewall-based traffic inspection or on-prem connectivity.

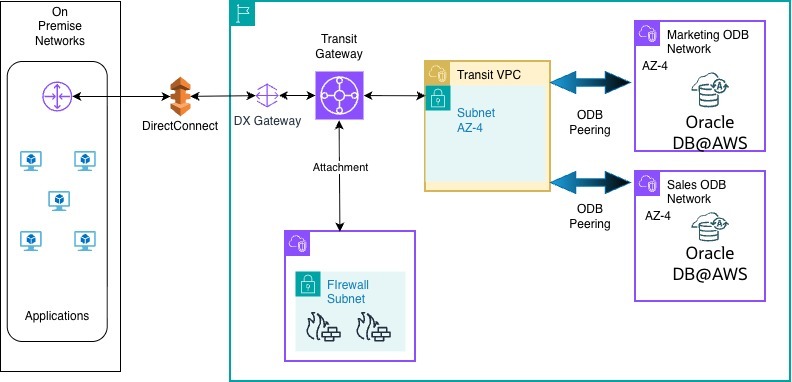

- On-Premises to ODB via TGW with Multiple Peering Connections

Here, on-premises applications connect to AWS via Direct Connect and into a centralized Transit Gateway. The Transit Gateway routes traffic into a Transit VPC after firewall inspection and then to Oracle Database@AWS environments via ODB peering.

The Oracle databases are deployed in the same Availability Zone (AZ4) and are peered to a single Transit VPC.

This architecture requires careful consideration of the following conditions and constraints:

Key Design Conditions

- The TGW attachment must be in the same Availability Zone as the ODB infrastructure (AZ4 in this example).

- The ODB Transit VPC can have only one TGW attachment, which necessitates a dedicated transit VPC design.

Given these constraints, a dedicated Transit VPC is recommended to host the Transit Gateway (TGW) attachment (see Figure 4). Because the Transit VPC can have only one TGW attachment, and that attachment must be in the same Availability Zone as the ODB network, isolating the Transit VPC helps avoid routing conflicts and scaling limitations.

This Transit VPC should be used strictly for network routing purposes and not for hosting application workloads.
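The design conditions above can be expressed as a small pre-deployment check. The following is a sketch only: `TransitVpcPlan` and `validate` are hypothetical names introduced for this example, the plan is a plain data object rather than anything read from AWS, and the AZ labels are placeholders.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TransitVpcPlan:
    """Hypothetical description of a planned ODB Transit VPC."""
    name: str
    odb_network_az: str
    # One entry per planned TGW attachment, holding that attachment's AZ.
    tgw_attachment_azs: List[str] = field(default_factory=list)
    hosts_application_workloads: bool = False


def validate(plan: TransitVpcPlan) -> List[str]:
    """Return a list of violated design conditions (empty if none)."""
    problems: List[str] = []
    if len(plan.tgw_attachment_azs) != 1:
        problems.append(
            f"{plan.name}: expected exactly one TGW attachment, "
            f"found {len(plan.tgw_attachment_azs)}"
        )
    for az in plan.tgw_attachment_azs:
        if az != plan.odb_network_az:
            problems.append(
                f"{plan.name}: TGW attachment in {az}, "
                f"but ODB network is in {plan.odb_network_az}"
            )
    if plan.hosts_application_workloads:
        problems.append(f"{plan.name}: Transit VPC should be routing-only")
    return problems


good_plan = TransitVpcPlan("transit-a", "az4", ["az4"])
bad_plan = TransitVpcPlan("transit-b", "az4", ["az1", "az4"],
                          hosts_application_workloads=True)
```

Here `validate(good_plan)` returns an empty list, while `validate(bad_plan)` reports the extra attachment, the AZ mismatch, and the application workloads.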

Additional Conditions:

- Create the TGW attachment in the ODB Transit VPC before updating the peered CIDR ranges.

- You do not need to add routes to the Application VPC within the ODB Transit VPC.

Limitations

Due to the lack of native TGW integration with ODB networks, the following limitations apply:

- ODB networks cannot be attached directly to a Transit Gateway.

- Public DNS resolution to private IP addresses is not supported in this setup.

- No event notifications are available for ODB network topology, routing, or connection state changes.

- Multicast traffic is not supported for ODB networks via TGW.

- Multiple Transit Gateways connected to the same Transit VPC are not supported. If you need multiple TGWs, or a combination of TGWs for different connections, use a separate Transit VPC for each service.
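The last limitation implies a one-to-one mapping between Transit Gateways and Transit VPCs. As a rough planning aid, the sketch below assigns each distinct TGW its own Transit VPC. The function name, the TGW ids, and the generated VPC names are all hypothetical.

```python
from typing import Dict, List


def plan_transit_vpcs(tgw_ids: List[str]) -> Dict[str, str]:
    """Map each distinct TGW id to its own (hypothetical) Transit VPC name."""
    plan: Dict[str, str] = {}
    for tgw in tgw_ids:
        if tgw not in plan:
            # First time this TGW is seen: allocate a new Transit VPC for it.
            plan[tgw] = f"transit-vpc-{len(plan) + 1}"
    return plan


# Two connections share "tgw-onprem"; a third uses a separate inspection TGW.
plan = plan_transit_vpcs(["tgw-onprem", "tgw-inspection", "tgw-onprem"])
```

Repeated TGW ids reuse the same Transit VPC, so no Transit VPC ends up with more than one Transit Gateway.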

Conclusion

The multi-VPC peering enhancement enables cleaner architectures, lower operational complexity, and more flexible cloud networking.

By adhering to the conditions and understanding the limitations, you can design a scalable and secure connectivity model for accessing Oracle Database@AWS environments.