Claude Mythos Preview, the associated Project Glasswing, and GPT-5.5-Cyber along with its Trusted Access for Cyber program point to a new security operating model: machine-speed vulnerability discovery, exploit validation, and attack-path chaining.

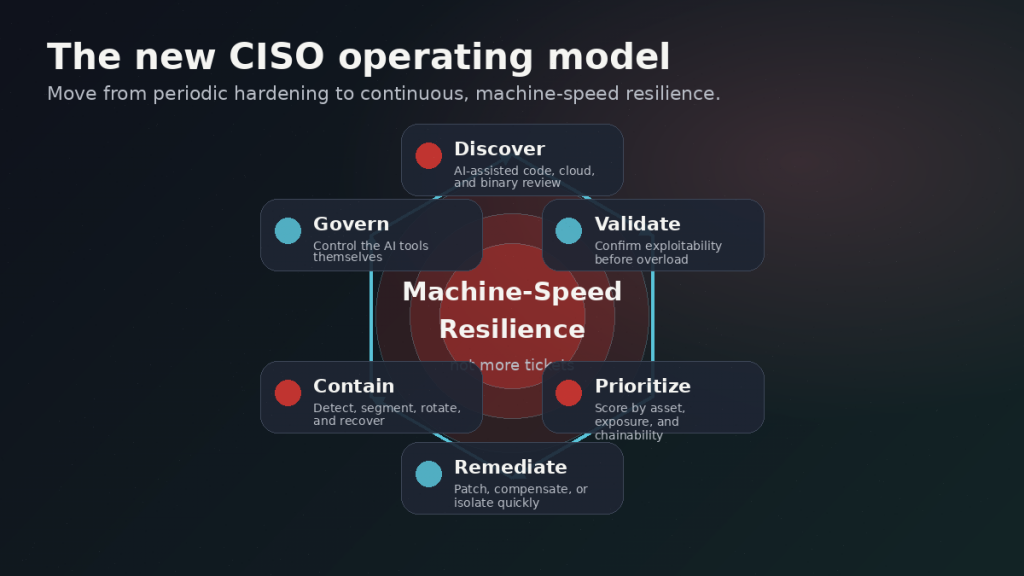

Frontier cyber models are changing the CISO threat model from human-speed vulnerability discovery to machine-speed exploit-chain reasoning.

As a former CISO and current Field CISO, I see Anthropic’s Claude Mythos Preview, Project Glasswing, and OpenAI’s GPT-5.5-Cyber as more than another wave of AI products and announcements. These frontier models signal the need for a new security operating model. AI systems that can reason across large codebases, identify subtle weaknesses, validate exploitability, and in some cases chain multiple vulnerabilities into working attack paths change the game for CISOs and their security staff.

These models are not just another scanner. Security teams already have scanners, fuzzers, static analysis, bug bounty programs, red teams, and skilled researchers. The shift is that frontier models are getting better at combining those pieces: reading code, forming hypotheses, running experiments, validating findings, drafting proof-of-concept paths for defenders, and connecting individual weaknesses into end-to-end intrusion scenarios.

For large enterprise environments – cloud platforms, SaaS estates, identity fabrics, databases, endpoints, developer pipelines, and third-party software supply chains – that changes the CISO playbook. The question is no longer whether AI can find bugs. The question is whether defenders can validate, prioritize, patch, segment, and contain faster than adversaries can chain weaknesses into impact.

Why Mythos and GPT-5.5-Cyber matter

Anthropic describes Project Glasswing as a controlled defensive effort that gives selected critical software organizations access to Claude Mythos Preview so they can search for and remediate vulnerabilities before similar capabilities become broadly available. Anthropic says Mythos Preview has already found thousands of high-severity vulnerabilities, including issues in every major operating system and major web browser, and that the model can identify and exploit zero-day vulnerabilities when directed by a user. [1] Anthropic has also said it does not plan to make Mythos Preview generally available while it continues work on safeguards for the model’s most dangerous outputs. [2]

Mozilla’s early experience is an important defensive signal. Mozilla reported that Firefox 150 included fixes for 271 vulnerabilities identified during an initial evaluation of Claude Mythos Preview. [3] That number is striking not because every finding is equally severe, but because it shows what happens when high-quality vulnerability discovery begins to scale.

OpenAI’s GPT-5.5-Cyber and its Trusted Access for Cyber program point in the same direction. OpenAI says it is scaling Trusted Access for Cyber to thousands of verified individual defenders and hundreds of teams, including access tiers for GPT-5.5-Cyber, a more cyber-permissive model for advanced defensive workflows such as binary reverse engineering of compiled software. [5] This confirms a broader industry trend: model providers are trying to accelerate defenders while applying stronger access controls to the most sensitive cyber capabilities.

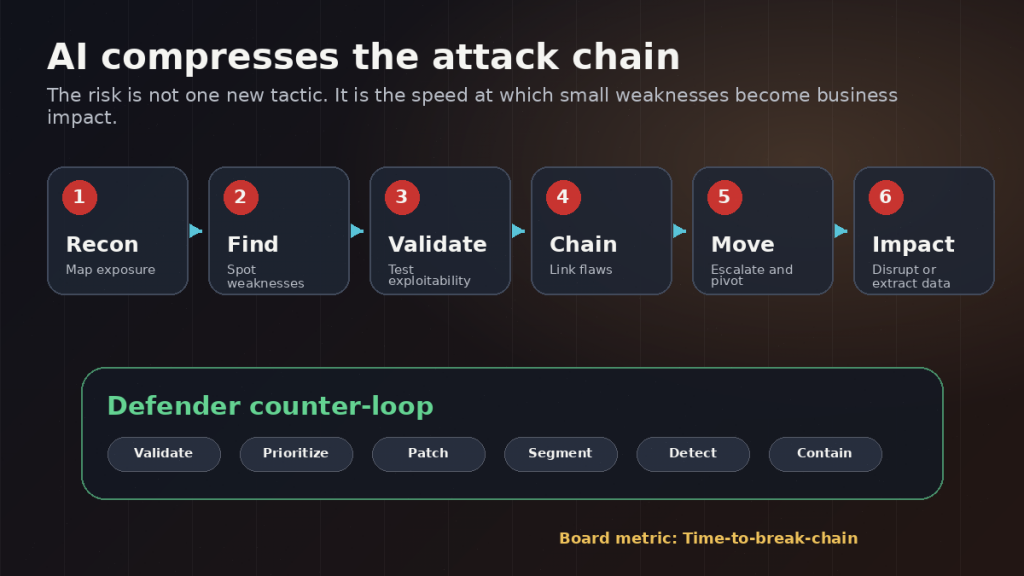

The CISO challenge is not just vulnerability volume. It is the compression of the attack chain.

The CISO nightmare scenario: exploit-chain industrialization

The most important shift is not simply “AI finds bugs.” The deeper concern is exploit-chain industrialization.

In the traditional model, a sophisticated attacker might spend weeks or months researching a target, understanding a codebase, developing an exploit, validating a privilege-escalation path, and stitching the pieces together. In the new model, AI-assisted operators can compress reconnaissance, vulnerability analysis, exploit validation, privilege escalation, lateral movement planning, and data-impact mapping into much shorter cycles.

Axios reported that early users of new Anthropic and OpenAI cyber-capable models see the biggest change in speed, scale, and the ability to turn vulnerabilities into working exploits. Early testers also emphasized that the models still work best with experienced operators and mature security harnesses, but the direction of travel is clear. [4]

The CISO problem is no longer “How many vulnerabilities do we have?” It is “Which weaknesses can be chained against our most important systems today, and how quickly can we break that chain?”

This is why CISOs should assume two things. First, capable AI-assisted vulnerability discovery will become more widely available. Second, adversaries will not wait for formal access programs. They will use jailbroken services, compromised accounts, stolen vendor access, open-source models, underground tooling, and eventually their own fine-tuned systems.

What CISOs should do now

1. Modernize vulnerability management around exploitability, not volume

AI will increase the number of findings. Raw counts will become less useful and more distracting. Security teams need validation pipelines that combine scanner output, AI-generated findings, asset criticality, internet exposure, compensating controls, exploit maturity, identity reachability, and runtime telemetry.

The operating question should be: “Which vulnerabilities are exploitable in our environment, and which combinations create a path to business impact?”
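As a minimal sketch of what such a validation pipeline might compute, the following combines a few of the signals listed above into a single ranking. The `Finding` fields, the weights, and the 0.5 discounts are illustrative assumptions, not a standard scoring method; a real pipeline would calibrate them against its own environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record merging scanner, AI, and asset-inventory data."""
    finding_id: str
    validated: bool             # reproduced by an analyst or a test harness
    internet_exposed: bool
    asset_criticality: int      # 1 (low) to 5 (crown jewel)
    exploit_maturity: float     # 0.0 (theoretical) to 1.0 (weaponized)
    compensating_control: bool  # e.g. segmentation or a WAF rule in front of the asset


def exploitability_score(f: Finding) -> float:
    """Illustrative score in [0, 1]; the weights are assumptions."""
    if not f.validated:
        return 0.0  # unvalidated findings belong in triage, not the patch queue
    score = f.exploit_maturity * (f.asset_criticality / 5)
    if not f.internet_exposed:
        score *= 0.5
    if f.compensating_control:
        score *= 0.5
    return round(score, 3)


def prioritize(findings):
    """Return validated findings, highest score first."""
    scored = [(exploitability_score(f), f) for f in findings]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [f for score, f in ranked if score > 0]


if __name__ == "__main__":
    queue = prioritize([
        Finding("C", False, True, 5, 1.0, False),
        Finding("B", True, False, 5, 0.8, True),
        Finding("A", True, True, 5, 0.8, False),
    ])
    print([f.finding_id for f in queue])
```

The point of the sketch is the ordering, not the numbers: an unvalidated finding with a frightening severity label drops out, while a validated, exposed finding on a critical asset rises to the top.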

2. Invest in continuous attack-path analysis

Traditional CVSS-based prioritization is not enough when attackers can combine multiple medium-severity weaknesses into a critical compromise path. CISOs should push for graph-based exposure management, identity-path analysis, cloud entitlement review, external attack-surface management, and application dependency mapping. Keep track of new frontier models to ensure your program stays current.

The leadership-level metric should become time-to-break-chain: how quickly can the organization disrupt a plausible path from initial access to business impact?
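To make graph-based attack-path analysis concrete, here is a small sketch under stated assumptions. The environment in `EDGES` is hypothetical, and each edge simply means "an attacker who controls the first node can reach the second". The code enumerates simple paths from an entry point to a crown-jewel asset, then counts how many paths share each edge, since widely shared edges are often the cheapest place to break the chain.

```python
from collections import Counter

# Hypothetical environment: X -> Y means "a foothold on X can reach Y".
EDGES = {
    "internet": ["web-app", "vpn"],
    "web-app": ["app-server"],
    "vpn": ["jump-host"],
    "app-server": ["svc-account"],
    "jump-host": ["svc-account"],
    "svc-account": ["customer-db"],
}


def attack_paths(graph, source, target, path=None):
    """Enumerate simple (cycle-free) paths from source to target."""
    path = (path or []) + [source]
    if source == target:
        return [path]
    paths = []
    for nxt in graph.get(source, []):
        if nxt not in path:  # never revisit a node already on this path
            paths.extend(attack_paths(graph, nxt, target, path))
    return paths


def choke_points(paths):
    """Count how many paths traverse each edge, most shared first."""
    counts = Counter()
    for p in paths:
        counts.update(zip(p, p[1:]))
    return counts.most_common()


if __name__ == "__main__":
    paths = attack_paths(EDGES, "internet", "customer-db")
    for p in paths:
        print(" -> ".join(p))
    print(choke_points(paths)[0])
```

In this toy graph both paths converge on the service account, so tightening that one identity disrupts every route to the database. Exhaustive path enumeration does not scale to real estates, which is why production tools rely on more selective graph algorithms, but the prioritization logic is the same.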

3. Reduce blast radius before AI accelerates lateral movement

If AI accelerates lateral movement, flat networks and over-permissioned identities become existential risks. Zero trust has to move from slogan to architecture: strong segmentation, phishing-resistant MFA, just-in-time access, privileged access management, service-account hygiene, workload identity controls, short-lived credentials, and rapid credential rotation.

The goal is not to prevent every initial foothold. The goal is to make every foothold small, observable, and expensive to expand.

4. Shift left – and stay shifted

If models can discover defects at scale, defenders must integrate AI-assisted security into development workflows before code reaches production. That means secure-by-default libraries, automated code review, dependency governance, fuzzing in CI/CD, software bills of materials, secret scanning, IaC policy checks, and required remediation SLAs for internet-facing and business-critical systems.

The lesson for engineering leaders is direct: security debt that once looked tolerable may become exploitable faster than the organization can respond.

5. Prepare incident response for AI-generated exploit velocity

Incident response playbooks should assume compressed timelines. Security operations teams need automated containment, high-fidelity detection engineering, canary credentials, deception assets, egress monitoring, and tested isolation procedures for crown-jewel systems.

Containment can no longer be a heroic manual exercise that begins after a meeting. It has to be pre-authorized, rehearsed, automated where appropriate, and tied to business-impact tiers.

6. Treat AI security tools as high-risk systems

A model that can find exploit chains is not just another productivity tool. It is a sensitive cyber capability. Controlled access, identity verification, logging, prompt auditing, vendor due diligence, data-loss controls, model-output review, retention policies, and abuse monitoring should be mandatory.

CISOs should know who is using these tools, what data is being sent to them, what outputs are being generated, and whether those outputs include exploit-enabling details that require special handling.

7. Govern AI agents across the software supply chain

AI coding agents, security agents, and vulnerability triage agents will increasingly touch source code, build systems, secrets, dependency manifests, cloud accounts, and incident data. That makes them part of the software supply chain. Require clear ownership, scoped permissions, approval gates for risky actions, artifact signing, sandboxed execution, and auditable change histories.
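One way to picture scoped permissions and approval gates is a small policy check that every agent action passes through before it executes. This is a sketch only: the agent names, action names, and the `AGENT_SCOPES` and `RISKY_ACTIONS` tables are hypothetical, and a real deployment would enforce the same idea in its identity and CI/CD platforms rather than in application code.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions that always require a human approver.
RISKY_ACTIONS = {"push_to_main", "rotate_secret", "modify_pipeline", "deploy_prod"}

# Hypothetical per-agent scopes: anything not listed is denied.
AGENT_SCOPES = {
    "triage-agent": {"read_code", "open_ticket"},
    "coding-agent": {"read_code", "open_pull_request", "push_to_main"},
}


@dataclass(frozen=True)
class AgentAction:
    agent: str
    action: str
    resource: str


def evaluate(request: AgentAction, approved_by: Optional[str] = None) -> str:
    """Return 'deny', 'needs_approval', or 'allow' for an agent request."""
    allowed = AGENT_SCOPES.get(request.agent, set())
    if request.action not in allowed:
        return "deny"  # outside the agent's scope, approval cannot override
    if request.action in RISKY_ACTIONS and approved_by is None:
        return "needs_approval"
    return "allow"


if __name__ == "__main__":
    push = AgentAction("coding-agent", "push_to_main", "payments-repo")
    print(evaluate(push))                       # no approver recorded yet
    print(evaluate(push, approved_by="alice"))  # approver recorded
```

The design choice worth noting is the order of the checks: scope is evaluated first, so an approval can unlock a risky action the agent is entitled to perform but can never widen what the agent is allowed to touch.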

The security leadership metrics need to change

Security leaders do not need another dashboard that counts every vulnerability. They need metrics that show whether the organization can withstand machine-speed pressure. Useful metrics include:

- Time-to-break-chain: how quickly the organization can disrupt a plausible attack path.

- Exposure half-life: how quickly critical exposure declines after discovery.

- Validated exploitable findings: how many findings survive validation after AI, scanners, and telemetry are combined.

- Identity blast radius: how far a compromised user, token, key, or service account can reach.

- Critical asset coverage: whether crown-jewel systems are mapped to owners, controls, and telemetry.

- AI tool audit coverage: whether prompts, outputs, access, and data-loss controls are logged for sensitive cyber tools.

Security leadership reporting should move from vulnerability volume to chain disruption, exposure decay, blast-radius reduction, and AI tool governance.
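Two of these metrics are simple enough to sketch. The definitions below are one possible interpretation, not an industry standard: exposure half-life is taken as the number of days until half of a cohort of findings has been remediated, and identity blast radius as the set of resources reachable from a starting identity through a hypothetical `grants` mapping of who can assume or access what.

```python
import math
from collections import deque


def exposure_half_life(days_to_fix):
    """Days until half of a cohort of findings is remediated.

    Each entry is the days from discovery to fix, or None if still open.
    Returns None when fewer than half of the findings are closed.
    """
    closed = sorted(d for d in days_to_fix if d is not None)
    needed = math.ceil(len(days_to_fix) / 2)
    if needed == 0 or len(closed) < needed:
        return None
    return closed[needed - 1]


def blast_radius(grants, identity):
    """Everything reachable from an identity via a breadth-first walk."""
    seen, queue = set(), deque([identity])
    while queue:
        node = queue.popleft()
        for nxt in grants.get(node, []):
            if nxt not in seen and nxt != identity:
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    # Hypothetical cohort: six findings, two still open.
    print(exposure_half_life([2, 10, 4, None, 30, None]))

    # Hypothetical grants: a CI token that can assume a build role, and so on.
    grants = {
        "ci-token": ["build-role"],
        "build-role": ["artifact-store", "deploy-role"],
        "deploy-role": ["prod-cluster"],
        "intern": ["wiki"],
    }
    print(sorted(blast_radius(grants, "ci-token")))
```

Tracked over time, a falling half-life and a shrinking reachable set are the kind of trend lines that tell leadership more than a raw vulnerability count.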

Bottom line

Mythos, GPT-5.5-Cyber, and the cyber-focused models that follow are not magic. Their findings still require validation. They will generate noise. They will need mature operators, disciplined workflows, and strong safeguards.

But they are a clear warning. Cyber defense has to move from periodic, human-speed hardening to continuous, machine-speed resilience. The winners will be the organizations that can find, validate, prioritize, patch, segment, detect, and contain faster than adversaries can chain weaknesses into impact.

That is the new CISO threat model. The attackers will use AI to compress the path to impact. Defenders need to use AI, architecture, governance, and automation to block that path first.

Oracle is taking this approach and has accelerated its security patching cadence from quarterly critical patch updates (CPU) to monthly critical security patch updates (CSPU) starting this month. [6]

References

1. Anthropic, Project Glasswing: Securing critical software for the AI era, April 7, 2026.

2. Anthropic Frontier Red Team, Assessing Claude Mythos Preview’s cybersecurity capabilities, April 7, 2026.

3. Mozilla Blog, The zero-days are numbered, April 2026.

4. Axios, New AI tools speed up known hacking tactics, early testers say, April 21, 2026.

5. OpenAI, Trusted access for the next era of cyber defense, April 14, 2026.

6. Oracle, Accelerating Vulnerability Detection and Response at Oracle, April 29, 2026.