Frontier cyber models are compressing the time between vulnerability discovery, exploit validation, and attack-path chaining. CISOs should move from scanner volume to exploitability, exposure context, safe validation, and time-to-break-path.

| CISO PERSPECTIVE: Frontier cyber models are compressing the distance between vulnerability discovery, validation, and exploit-chain reasoning. Vulnerability management has to move with it – from scanner volume to exposure intelligence, safe validation, and business-risk reduction. |

In my previous post, Oracle CISO Perspective: Mythos, GPT 5.5-Cyber, and the CISO’s New Threat Model, I discussed how the new frontier cyber models enable machine-speed vulnerability discovery, exploit validation, and attack-path chaining, and why that calls for a new security operating model. I also offered seven recommendations for CISOs to consider as they modernize their security programs for the compressed attack timeline that is now upon us.

This post goes one layer deeper into what that means for vulnerability management. The goal is not simply to process more findings. The goal is to identify exploitable exposure, understand which combinations create a path to material impact, and break those paths before an adversary can automate them.

| CISO QUESTION: The traditional executive question, “How many critical vulnerabilities do we have?” is no longer sufficient. The better CISO question is: Which exposures are exploitable in our environment, and which combinations create a path to business impact? |

Why vulnerability management has to change

Frontier cyber models such as Claude Mythos Preview and GPT-5.5-Cyber are not just another generation of scanners. They point to a broader shift in how quickly skilled operators can reason across code, identity, cloud configuration, exposed services, and business systems.

That changes the center of gravity for vulnerability management. More findings will arrive faster. Some will be noise. Some will be real. The differentiator will be the organization’s ability to add context, validate safely, assign ownership, and remediate in the order that actually reduces business risk.

Oracle has also publicly emphasized AI-enabled vulnerability detection and response, including faster delivery of critical security fixes for customer-managed environments. The broader lesson for CISOs is clear: speed is becoming a security control, not just an operational preference.

A modern vulnerability-management program should make six shifts.

1. Build an enterprise exposure graph, not just a scanner backlog

A scanner backlog is a list of symptoms. An exposure graph shows relationships. It joins vulnerability data with asset inventory, CMDB ownership, internet exposure, identity permissions, cloud entitlements, endpoint telemetry, application ownership, data sensitivity, and business criticality.

It is a living model and should answer practical questions such as: Which internet-facing systems run vulnerable code? Which service accounts can reach sensitive data? Which workloads have excessive cloud entitlements? Which vulnerable dependency is present in production, reachable from an attacker-controlled path, and attached to a revenue-generating service?

Start with the crown jewels rather than the whole enterprise. Choose the services whose compromise would create material impact: payment processing, identity platforms, customer data stores, development pipelines, SaaS control planes, regulated workloads, or operational systems. Then connect the data streams that explain how an attacker could reach them.

The useful unit of analysis is not the CVE. It is the path. Attack-path and exposure-management tools can help show how an entry point can progress toward a critical asset, but tooling will not solve poor context. A graph without identity data, internet exposure, runtime evidence, and business criticality simply produces better-looking noise.
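To make "the path, not the CVE" concrete, here is a minimal Python sketch of path enumeration over a toy exposure graph. The node names, the edge list, and the `find_paths` helper are all hypothetical; a real graph would be built from inventory, identity, and cloud-entitlement data rather than a hard-coded dictionary.

```python
from collections import deque

# Hypothetical exposure graph: an edge means "an attacker positioned at the
# source can plausibly reach the target". All names are illustrative.
EDGES = {
    "internet": ["web-frontend"],
    "web-frontend": ["svc-account-api"],
    "svc-account-api": ["payments-db", "build-runner"],
    "build-runner": ["payments-db"],
    "internal-test-host": ["build-runner"],
}

def find_paths(edges, source, target, max_hops=6):
    """Return every simple path from source to target, shortest first (BFS)."""
    paths = []
    queue = deque([[source]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        if len(path) > max_hops:  # path already uses max_hops edges
            continue
        for nxt in edges.get(node, []):
            if nxt not in path:  # never revisit a node, so no cycles
                queue.append(path + [nxt])
    return paths

paths = find_paths(EDGES, "internet", "payments-db")
for p in paths:
    print(" -> ".join(p))
```

In this toy graph both routes to `payments-db` pass through `svc-account-api`, which is the kind of choke point a flat scanner backlog would not surface.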

2. Prioritize by validated exploitability, not severity alone

CVSS remains useful, but it should be one signal among many. Each finding should be enriched with CISA Known Exploited Vulnerabilities status, FIRST EPSS probability, exploit maturity, runtime presence, network reachability, compensating controls, asset exposure, owner, and business impact.

The difference matters. A medium-severity flaw on an internal test host may wait. A medium-severity flaw on an externally reachable workload, combined with an over-privileged managed identity and a sensitive database path, may become a board-level risk.

This is where the CISO narrative should change. The organization is not trying to win by closing the most tickets. It is trying to reduce the exposures most likely to become material incidents.
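One way to picture severity as "one signal among many" is a small scoring function. The sketch below is illustrative only: the `Finding` fields, the weights, and the placeholder CVE identifiers are my assumptions, not a standard formula, and any real program would tune them against its own incident history.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    cve_id: str               # placeholder identifiers below, not real CVEs
    cvss: float               # base score, 0.0 to 10.0
    epss: float               # FIRST EPSS probability, 0.0 to 1.0
    in_kev: bool              # listed in CISA Known Exploited Vulnerabilities
    internet_reachable: bool
    loaded_at_runtime: bool
    asset_criticality: int    # 1 (low) to 5 (crown jewel), set by the business

def priority(f: Finding) -> float:
    """Illustrative score in [0, 100]; every weight here is an assumption."""
    # Known exploitation outweighs a low predicted probability.
    likelihood = max(f.epss, 0.9 if f.in_kev else 0.0)
    if not f.loaded_at_runtime:
        likelihood *= 0.3
    if not f.internet_reachable:
        likelihood *= 0.5
    # Impact blends technical severity with business criticality.
    impact = 0.5 * (f.cvss / 10.0) + 0.5 * (f.asset_criticality / 5.0)
    return round(100 * likelihood * impact, 1)

lab_critical = Finding("CVE-0000-0001", 9.8, 0.02, False, False, True, 1)
edge_medium = Finding("CVE-0000-0002", 6.5, 0.40, True, True, True, 5)

for f in sorted([lab_critical, edge_medium], key=priority, reverse=True):
    print(f.cve_id, priority(f))
```

With these assumed weights, the medium-severity finding on the exposed, business-critical workload ranks well above the critical-severity finding on the internal test host, which mirrors the example above.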

3. Break the path, not merely the ticket queue

Do not ask only which patch is oldest or loudest. Ask which action breaks the most credible paths to impact.

The best fix may be a patch. It may also be removing internet exposure, disabling a stale account, enforcing phishing-resistant MFA, rotating a secret, tightening a security group, segmenting a crown-jewel subnet, or converting standing privilege to just-in-time access.
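A simple way to ask "which action breaks the most credible paths" is a greedy selection over a mapping from remediation actions to the paths each one would break. The sketch below assumes that mapping already exists (for example, derived from an exposure graph); the action names, path identifiers, and the `plan` helper are hypothetical.

```python
# Hypothetical mapping: remediation action -> attack paths it would break.
ACTIONS = {
    "patch web-frontend": {"path-1", "path-2"},
    "rotate svc-account-api secret": {"path-1", "path-2", "path-3"},
    "remove internet exposure on admin port": {"path-4"},
    "tighten build-runner security group": {"path-2", "path-3"},
}

def plan(actions, open_paths):
    """Greedy ordering: repeatedly pick the action that breaks the most open paths."""
    remaining = set(open_paths)
    ordered = []
    while remaining:
        best = max(actions, key=lambda a: len(actions[a] & remaining))
        broken = actions[best] & remaining
        if not broken:
            break  # nothing on the list covers what is left
        ordered.append((best, sorted(broken)))
        remaining -= broken
    return ordered, remaining

ordered, uncovered = plan(ACTIONS, {"path-1", "path-2", "path-3", "path-4"})
for action, broken in ordered:
    print(f"{action}: breaks {', '.join(broken)}")
```

Greedy selection is a heuristic, not an optimal plan, and it ignores cost and change risk. Even so, it shows how a secret rotation can outrank a patch when it sits on more paths.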

| EXECUTIVE METRIC: Track time-to-break-path: how quickly the organization can disrupt a plausible route from initial access to material business impact. That metric is more useful than a raw count of open critical vulnerabilities because it measures whether risk is actually being reduced. |

4. Add controlled exploit validation

Validation is the discipline that turns theoretical exposure into evidence. It should not mean distributing weaponized proof-of-concept code broadly or allowing autonomous agents to attack production. It means using approved, bounded, logged methods to determine whether a vulnerability or attack path is reachable, whether exploitation prerequisites exist, and whether preventive or detective controls work as expected.

A mature validation program should have clear tiers:

- Tier 0 – passive evidence: vulnerable package present, service exposed, affected version loaded, vulnerable function reachable, or exploitation observed in the wild.

- Tier 1 – lab reproduction: isolated testing using vendor advisories, patch diffs, containers, fuzzers, and safe test harnesses.

- Tier 2 – production-safe reachability and control validation: checks that confirm whether a service is reachable, whether controls block or detect the behavior, and whether response workflows trigger.

- Tier 3 – formal purple-team or breach-and-attack simulation: testing under written rules of engagement, approved windows, named owners, non-destructive payloads, rate limits, rollback plans, and clear stop conditions.

Security validation platforms can help institutionalize this discipline. They are most valuable when tied back to the exposure graph: select the top business-impact paths, validate the choke points, record the evidence, remediate the gap, and retest. The output should be a small set of facts: exploitable or not exploitable; blocked, detected, or missed; owner assigned; compensating control accepted or rejected; retest date set.
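The tiers and the "small set of facts" can be captured in a compact record. The following Python sketch is one possible shape, not a product schema: the `Tier` enum, the `ValidationResult` fields, and the `needs_remediation` rule are assumptions chosen to mirror the list above.

```python
from dataclasses import dataclass
from datetime import date
from enum import IntEnum
from typing import Optional

class Tier(IntEnum):
    PASSIVE_EVIDENCE = 0
    LAB_REPRODUCTION = 1
    PRODUCTION_SAFE_CHECK = 2
    PURPLE_TEAM = 3

@dataclass
class ValidationResult:
    path_id: str
    tier: Tier
    exploitable: bool
    control_outcome: str                            # "blocked", "detected", or "missed"
    owner: str
    compensating_control_accepted: Optional[bool]   # None means not yet decided
    retest_date: date

    def __post_init__(self):
        # Reject free-text outcomes so results stay comparable across teams.
        if self.control_outcome not in {"blocked", "detected", "missed"}:
            raise ValueError(f"unknown control outcome: {self.control_outcome}")

def needs_remediation(r: ValidationResult) -> bool:
    """Assumed rule: exploitable, not blocked, and no accepted compensating control."""
    return bool(
        r.exploitable
        and r.control_outcome != "blocked"
        and not r.compensating_control_accepted
    )

result = ValidationResult(
    path_id="path-1",
    tier=Tier.PRODUCTION_SAFE_CHECK,
    exploitable=True,
    control_outcome="missed",
    owner="payments-team",
    compensating_control_accepted=None,
    retest_date=date(2030, 1, 15),
)
print(result.path_id, "needs remediation:", needs_remediation(result))
```

Keeping the record this small is deliberate: each field maps to a decision someone has to own, and anything that cannot be expressed this way probably needs another validation pass.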

Frontier models can accelerate defensive validation by summarizing advisories, comparing vulnerable and patched code, proposing safe validation plans, generating detection hypotheses, correlating logs, drafting remediation guidance, and helping engineers understand why a fix matters. But they must operate inside guardrails: human approval for active tests, no unsupervised production exploitation, strict data-handling rules, prompt and output logging, separation of lab and production credentials, and review for third-party targets.
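Several of these guardrails can be enforced as a pre-flight check before any model-proposed test runs. The sketch below assumes a hypothetical `ProposedTest` record and covers only three of the rules (human approval, credential separation, third-party review); logging and data-handling controls would live elsewhere.

```python
from dataclasses import dataclass

@dataclass
class ProposedTest:
    target_env: str           # "lab" or "production"
    active: bool              # True if it sends traffic or changes state
    credential_scope: str     # "lab" or "production"
    third_party_target: bool
    human_approver: str = ""  # empty until a named person signs off

def guardrail_violations(t: ProposedTest) -> list:
    """Return the reasons a proposed test may not run; an empty list means proceed."""
    problems = []
    if t.active and not t.human_approver:
        problems.append("active test requires human approval")
    if t.credential_scope != t.target_env:
        problems.append("credentials must match the target environment")
    if t.third_party_target:
        problems.append("third-party target requires separate review")
    return problems

passive_lab = ProposedTest("lab", False, "lab", False)
unapproved_prod = ProposedTest("production", True, "lab", False)

print(guardrail_violations(passive_lab))
print(guardrail_violations(unapproved_prod))
```

Returning the reasons, rather than a bare yes or no, gives the requesting engineer something actionable and gives the audit trail something to record.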

5. Move remediation closer to engineering

AI-assisted vulnerability discovery will punish quarterly cleanup cycles. Security teams need to move from after-the-fact ticket routing to engineering-integrated risk reduction.

CISOs should require secure-by-default libraries, SBOM and VEX workflows, dependency governance, secret scanning, infrastructure-as-code policy checks, fuzzing in CI/CD, AI-assisted code review, and service-owner SLAs. Findings should arrive with ownership, business context, fix guidance, exception criteria, and retest requirements.

The cultural change is as important as the tooling. Engineering teams should not receive vulnerability findings as unexplained audit demands. They should receive prioritized, contextual work items that explain the business risk, the path to impact, the required action, and the evidence needed to close the loop.

6. Govern AI cyber tools as privileged infrastructure

A model that can reason about exploit chains is not a casual productivity tool. It is privileged security infrastructure.

Access should be role-based, logged, monitored, and limited to approved defensive use. Prompts, outputs, uploaded code, exploit artifacts, and third-party data-retention terms require security review. Teams should separate lab and production credentials, define approved use cases, review generated output before action, and retain audit logs for sensitive cyber workflows.
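As a rough illustration of role-based, logged access, the sketch below checks a request against an allow-list of approved use cases and emits an audit record either way. The role names, use-case names, and record fields are hypothetical, and storing SHA-256 digests in the record assumes the full prompt and output text is retained separately in a controlled store.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical allow-list: role -> approved defensive use cases.
APPROVED_USES = {
    "vuln-analyst": {"summarize_advisory", "draft_remediation"},
    "validation-engineer": {"summarize_advisory", "propose_validation_plan"},
}

def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def audit_record(user, role, use_case, prompt, output):
    """Build one audit entry; denied requests are recorded too."""
    allowed = use_case in APPROVED_USES.get(role, set())
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "use_case": use_case,
        "allowed": allowed,
        "prompt_sha256": sha256_hex(prompt),
        # A denied request should never have produced output to log.
        "output_sha256": sha256_hex(output) if allowed else None,
    }

record = audit_record(
    "alice", "vuln-analyst", "summarize_advisory",
    prompt="Summarize the vendor advisory for the payments service.",
    output="(model output would appear here)",
)
print(json.dumps(record, indent=2))
```

Recording denials alongside approvals matters: a pattern of rejected requests is itself a signal worth reviewing.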

This governance requirement will become more important as AI agents begin touching source code, build systems, secrets, dependency manifests, cloud accounts, and incident data. If an AI tool can influence the software supply chain, it needs ownership, scoped permissions, approval gates, artifact controls, and auditable change history.

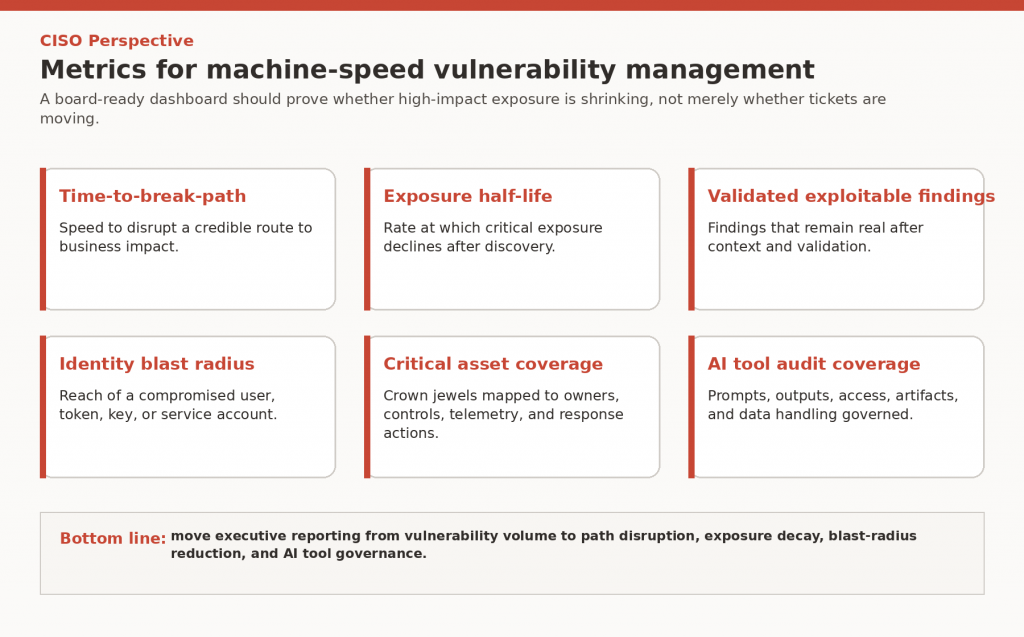

The CISO metrics should change

Security leadership does not need another dashboard that counts every vulnerability. It needs metrics that show whether the organization can withstand machine-speed pressure.

Figure 2: CISO metrics for machine-speed vulnerability management

- Time-to-break-path: how quickly the organization can disrupt a plausible attack path.

- Exposure half-life: how quickly critical exposure declines after discovery.

- Validated exploitable findings: how many findings survive validation after scanners, AI, telemetry, and business context are combined.

- Identity blast radius: how far a compromised user, token, key, or service account can reach.

- Critical asset coverage: whether crown-jewel systems are mapped to owners, controls, telemetry, and tested response actions.

- AI tool audit coverage: whether prompts, outputs, and access are logged, and data-loss controls applied, for sensitive cyber tools.
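Two of these metrics are easy to compute once the timestamps exist. The sketch below shows one possible definition of each: time-to-break-path as the median hours from discovering a path to breaking it, and exposure half-life as the first day on which open critical exposure falls to half its initial count. Both definitions, the function names, and the sample data are assumptions for illustration.

```python
from datetime import datetime
from statistics import median

def time_to_break_path_hours(events):
    """Median hours between discovering a path and the action that broke it."""
    hours = [(broken - found).total_seconds() / 3600 for found, broken in events]
    return median(hours)

def exposure_half_life_days(open_counts):
    """First day index where open exposure is at most half the day-0 count.

    open_counts[i] is the number of open critical exposures i days after
    discovery. Returns None if the count never halves in the window.
    """
    threshold = open_counts[0] / 2
    for day, count in enumerate(open_counts):
        if count <= threshold:
            return day
    return None

# Illustrative data only: (discovered, broken) timestamps for three paths.
events = [
    (datetime(2030, 1, 1, 8, 0), datetime(2030, 1, 1, 20, 0)),
    (datetime(2030, 1, 2, 8, 0), datetime(2030, 1, 3, 8, 0)),
    (datetime(2030, 1, 3, 8, 0), datetime(2030, 1, 5, 8, 0)),
]
ttbp = time_to_break_path_hours(events)
half_life = exposure_half_life_days([40, 31, 22, 18, 9])
print(f"time-to-break-path (median hours): {ttbp}")
print(f"exposure half-life (days): {half_life}")
```

A median is used rather than a mean so that one long-running exception does not mask an otherwise fast program; reporting the worst case next to it would keep that exception visible.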

Bottom line

The winning vulnerability-management program will not be the one that patches the most CVEs. It will be the one that continuously identifies exploitable exposure, understands the combinations that matter, validates controls safely, and breaks the path to business impact before an adversary can automate it.

Frontier cyber models compress the timeline. Modern vulnerability management must compress the defensive decision cycle in response: know what matters, validate what is real, fix what breaks the path, and govern the tools that make this work move faster.

To learn more, see my previous post, Oracle CISO Perspective: Mythos, GPT 5.5-Cyber, and the CISO’s New Threat Model.